Scientific discovery for stockpile stewardship

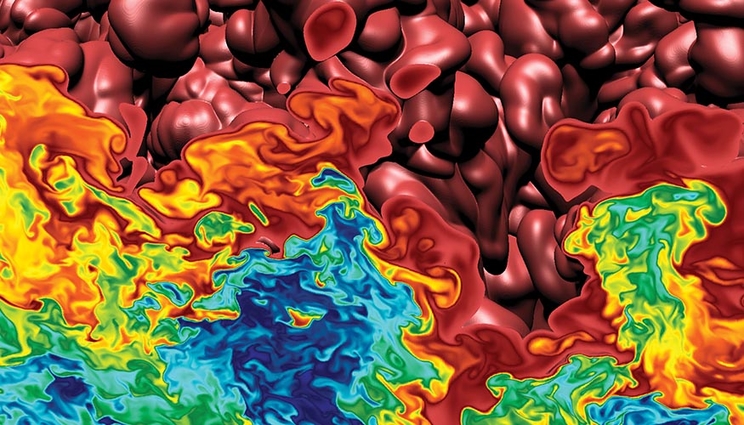

BlueGene/L simulation of turbulent thermonuclear burning in a type Ia supernova. The IBM BlueGene/L ranked #1 on the TOP500 list of most powerful computers from November 2004 to June 2008. Its arrival at the Laboratory marked a turning point in power and speed—and was a boon to stockpile stewardship.

Scientific discovery during the Stockpile Stewardship Program maintains confidence in the nuclear deterrent without testing, brings other benefits

The last nuclear test, code-named Divider, took place 30 years ago, on September 23, 1992. That year, President Bush declared a temporary moratorium on nuclear testing, which became permanent during the Clinton administration. This ending of the era of nuclear testing was also the beginning of stockpile stewardship.

Leaders from the Department of Energy (DOE) and the Lawrence Livermore, Los Alamos and Sandia national laboratories convened to develop a strategy and map out an R&D effort that would come to be known as the Stockpile Stewardship Program (SSP). Its mission was to ensure the readiness of the nation’s nuclear deterrent force without nuclear tests.

This second article of a series surveys some of the significant scientific discoveries that have helped ensure the reliability of the nation’s nuclear stockpile.

For decades after World War II, developing new nuclear weapons was a matter of designing, testing and adjusting the design to achieve the desired explosive yield and other performance goals, and then testing again. The U.S. conducted 1,054 nuclear tests, more than 1,000 of them at what was then known as the Nevada Test Site, and researchers collected a great deal of data. “There was a lot of data that we didn’t completely understand or have the time to analyze because we had to move on to the next test,” says Richard Ward, a physicist and weapon designer who started working at the Lab in 1982.

In 1992, the era of nuclear testing came to an end. Some in the nuclear national security community were surprised, a few were not. Certain researchers had thought about how to continue to design and assure the reliability of nuclear devices if testing ever ended. But most expected the design–test cycle to continue indefinitely. “It never occurred to me at the time that testing might end. I assumed I’d be working on testing my whole career,” Ward notes. But after it did, he and others moved on to new projects as the stewardship program began to ramp up. Earlier in his career, Ward had helped build the Nova laser, a predecessor of today’s National Ignition Facility (NIF), the most energetic laser in the world. He moved on to leadership positions in the Weapons and Complex Integration (WCI) Directorate.

Formally launched in 1995, the SSP included ambitious plans to develop new weapons simulation and non-nuclear testing capabilities. Stewardship science required developing more powerful high-performance computers (HPC), lasers capable of creating the most extreme states of matter on Earth and new technologies to accurately measure particle and radiation intensities and spectra, temperatures, pressures and other quantities at high-energy density. Beyond their use in certifying the stockpile’s reliability without testing, these technologies opened an era of basic scientific discovery that continues to this day.

Brad Wallin, principal associate director for WCI, names six areas of study that exemplify the scientific discoveries important to stockpile stewardship over the past 30 years. “Some of the most important areas have included the precision with which we’ve been able to understand the behavior of plutonium; solving the energy balance problem; discoveries made at the National Ignition Facility; detailed understanding of the chemical behavior of high explosives; advanced manufacturing techniques and new materials; high physics fidelity, and high-resolution simulation codes.”

One example of scientific research that helped stockpile stewardship is work that extended the phase diagram and equation of state of plutonium and other materials relevant to nuclear devices. The equation of state describes how a material, whether a chemical element like plutonium or a compound such as water, responds to changes in pressure, volume and temperature. A material’s phase diagram is a map of how its atomic or molecular arrangement changes under these conditions, and of where it exists as a solid, liquid, vapor or plasma. Water, for example, changes from solid to liquid to vapor as the temperature rises. As a solid, water ice has more than a dozen molecular arrangements at different temperatures and pressures.

Research performed at Livermore and elsewhere to establish the equation of state and phase changes of plutonium and other stockpile-relevant materials has generated crucial data that have played an important role in improving the computer simulations used to model high-energy density conditions relevant to nuclear devices.

Measuring the compressibility of materials

Rick Kraus, a research scientist in the Physics Division, points out that studies of how materials behave under sudden, extreme compressive stresses have contributed significantly to stockpile stewardship science. To perform these studies, researchers at Livermore and elsewhere have used the diamond anvil cell, which compresses materials to extreme pressures, often in the millions of bars (roughly equivalent to millions of atmospheres of pressure), as well as the gas gun, which fires projectiles at targets and rapidly pressurizes the targeted material. A 20-meter-long, two-stage gas gun at the Joint Actinide Shock Physics Experimental Research (JASPER) Facility located at the Nevada National Security Site has provided researchers with a wealth of compressibility data.

With gas gun data, experimentalists measure a material’s Hugoniot. “This is a thermodynamic state that a material, such as a piece of plutonium, reaches when a shock wave is driven through it,” says Kraus. “It’s relatively simple to interpret and provides incredibly accurate baseline information on how a material behaves at high pressure.” Beyond showing how materials densify with increasing pressure, shock waves can be used to understand the conditions at which materials melt and solidify.
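To make the idea of a Hugoniot state concrete, the following minimal Python sketch applies the standard conservation laws for mass and momentum across a steady shock front (the Rankine-Hugoniot jump conditions). It is an illustration only, not the analysis used at JASPER. It assumes a linear fit between shock speed and particle speed, which is a common empirical approximation, and the copper-like parameters are approximate textbook-style values chosen for illustration.

```python
def hugoniot_state(rho0, c0, s, up):
    """Return (shock speed, pressure, density) behind a steady planar shock.

    rho0 : initial density in kg/m^3
    c0   : intercept of the assumed linear Us-up fit, in m/s
    s    : dimensionless slope of the assumed linear Us-up fit
    up   : particle (piston) speed behind the shock, in m/s
    """
    us = c0 + s * up                 # assumed empirical relation Us = c0 + s*up
    pressure = rho0 * us * up        # momentum conservation, initial pressure neglected
    density = rho0 * us / (us - up)  # mass conservation across the front
    return us, pressure, density


# Illustrative, approximate parameters for a copper-like metal (assumptions).
us, p, rho = hugoniot_state(rho0=8930.0, c0=3940.0, s=1.49, up=1000.0)
print(f"shock speed: {us / 1e3:.2f} km/s")
print(f"pressure:    {p / 1e9:.1f} GPa")
print(f"compression: {rho / 8930.0:.3f} x initial density")
```

Sweeping `up` over a range of impact speeds traces out a curve of pressure against density, which is the kind of baseline compressibility information the passage describes.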

Kraus and colleagues’ measurements of the high-pressure melt curve of metals such as tantalum, copper and iron have provided useful data both to stockpile stewardship, and to the understanding of planetary interiors. Their studies of tantalum have helped reconcile contradictory prior results from different studies of tantalum’s behavior, allowing them to develop a consistent equation of state for that element.

Their measurements at NIF experimentally determined the high-pressure melting curve and structural properties of pure iron up to 1,000 gigapascals (nearly 10,000,000 atmospheres), three times the pressure of Earth’s inner core. The results will help researchers understand the conditions under which planets with iron cores form magnetic fields, which help protect biomolecules from cosmic radiation, a condition thought by some to be necessary for life to form on Earth-like planets. Their measurement method will also be applicable to stockpile-relevant materials.
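The pressure units used in this section (gigapascals, bars and atmospheres) can be related with a short calculation. The sketch below uses only the standard definitions of the bar and the standard atmosphere; the helper names are illustrative.

```python
PA_PER_GPA = 1.0e9       # pascals per gigapascal
PA_PER_BAR = 1.0e5       # pascals per bar, by definition
PA_PER_ATM = 101_325.0   # pascals per standard atmosphere, by definition


def gpa_to_atm(p_gpa):
    """Convert a pressure in gigapascals to standard atmospheres."""
    return p_gpa * PA_PER_GPA / PA_PER_ATM


def gpa_to_megabar(p_gpa):
    """Convert a pressure in gigapascals to millions of bars."""
    return p_gpa * PA_PER_GPA / PA_PER_BAR / 1.0e6


print(f"1,000 GPa = {gpa_to_atm(1000.0):,.0f} atm")
print(f"1,000 GPa = {gpa_to_megabar(1000.0):.1f} Mbar")
```

Because a bar and an atmosphere differ by only about 1 percent, "millions of bars" and "millions of atmospheres" are nearly interchangeable at this level of precision.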

Working hand-in-hand with experiments, increasingly powerful high-performance computers have allowed Laboratory researchers to model the materials and processes relevant to the stockpile with greater fidelity. “High-performance computing has been critical, and not just the biggest supercomputers, but the workhorse clusters that we use on a day-to-day basis,” said Kraus. “Mathematical methods allow us to accurately approximate solutions to Schrödinger’s equation for many-electron and many-body systems. These approximations provide us with a lot of intuition about how materials like metals, explosives, and insulators behave at extreme conditions.”

Materials aging studies provide physics for models

The aging of plutonium is a concern for stockpile stewardship. Researchers need to understand how plutonium ages and how the radiation it emits affects other components in a nuclear device. Pits, which are shells made of plutonium, are key components in weapons, and understanding how they age is important. Plutonium-239 has a half-life of about 24,100 years. This is long compared to the age of the stockpile, but the effects on a device’s performance as the pit decays need to be understood. Radiation from the pits could also affect other components.

Researchers have studied the aging of plutonium by implanting samples with helium at the Laboratory’s Center for Accelerator Mass Spectrometry. Experiments such as these provide a useful check on how materials within the stockpile age in place. When a plutonium nucleus decays, it emits an alpha particle, which becomes a helium atom, and leaves behind a uranium nucleus. As plutonium ages, bubbles of helium form within the material, which researchers must study to determine the resultant influence on the performance of nuclear weapons. Other materials also age and change as they are exposed to radiation.
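A back-of-the-envelope calculation shows why helium buildup matters even though the half-life is long. The Python sketch below applies the ordinary exponential decay law to the 24,100-year half-life quoted above. It assumes pure plutonium-239 and that every alpha particle stays in the metal as a helium atom; real pit material contains other isotopes and behaves in more complicated ways, so the numbers are illustrative only.

```python
HALF_LIFE_YEARS = 24_100.0  # half-life quoted in the article (plutonium-239)


def fraction_decayed(years, half_life=HALF_LIFE_YEARS):
    """Fraction of the original nuclei that have decayed after `years`."""
    return 1.0 - 2.0 ** (-years / half_life)


def helium_appm(years, half_life=HALF_LIFE_YEARS):
    """Helium atoms per million original plutonium atoms.

    Assumes one retained helium atom per decay (a simplifying assumption).
    """
    return 1.0e6 * fraction_decayed(years, half_life)


for age in (10, 30, 50):
    print(f"{age:>3} years: {fraction_decayed(age):.4%} decayed, "
          f"about {helium_appm(age):.0f} helium atoms per million")
```

Under these assumptions, roughly 0.14 percent of the atoms decay in 50 years. That is a small fraction of the plutonium, but it corresponds to more than a thousand helium atoms per million lattice atoms, which is why helium bubbles are a subject of study.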

“As our stockpile evolves and ages, what do we need to know in order to understand how their performance will change?” asked Kraus. “As we look to the future, as we make new materials for the stockpile, I think we’ll be looking to push our fundamental understanding of how materials behave.” Researchers at NIF have developed a program and a facility to continue to explore plutonium’s behavior and equation of state at high-energy density conditions. Plutonium equation-of-state experiments began at NIF in 2019. These studies help researchers understand not only the behavior of materials at these conditions, but also the basic physics of high-energy processes.

LLNL developed capabilities to produce the large diffraction gratings that made the power of the petawatt laser possible. In this image from the 1990s, a technician uses a process called interference lithography in fabricating a grating — an example of advanced materials and manufacturing prowess that contributes to stockpile stewardship.

Solving physics problems at NIF

By subjecting materials to powerful, energetic laser pulses, researchers can replicate, on a small scale, physical processes such as the nuclear fusion that takes place within stars or nuclear weapons detonations. “The National Ignition Facility has produced diagnosable thermonuclear explosions in the laboratory and thus gives us the capability of testing codes, and producing data in many relevant physics regimes,” said Omar Hurricane, chief scientist of LLNL's Inertial Confinement Fusion (ICF) Program.

“At the time testing ended, we were just beginning to get the kind of data we needed,” said Cynthia Nitta, who started at Livermore as a weapon designer in the 1980s, and today is chief program advisor for Future Deterrent, Weapons Physics and Design. “Some of the diagnostics we had developed for underground nuclear testing eventually ended up being used at the National Ignition Facility,” she noted.

Hurricane joined the Lab in 1998. Beamlet, a successor of the Nova laser, and a single-beam prototype of NIF, had just been shut down. When he arrived, “there were outstanding issues from the testing era that left discrepancies between the results from our models and test results,” he noted. A research group led by Hurricane solved one longstanding issue, known as “energy balance.” This apparent violation of the fundamental physical law of energy conservation in nuclear explosions remained a puzzle, although most of the pieces to a solution had been hypothesized previously by designers. “A 10-year effort of theory, modeling and experiments at NIF eventually resolved energy balance,” said Hurricane, greatly increasing confidence in the modeling.

“For the past 10 years, I’ve been involved in the inertial confinement fusion program. We’ve been able to increase the energy yield of fusion shots at NIF by a factor of 1,000,” he noted. “ICF research is relevant to stockpile stewardship because some of the same pitfalls in models that predict fusion are present in stockpile models…Only by conducting experiments can we calibrate our optimism and do a reality check on what we think we know.” The physics of fusion is one of the grand challenges of science. “A lot of competing physical processes take place during an ICF implosion,” he said. “It throws energy around in many different forms. Being able to predict how much energy is produced, what form it’s taking, and where it’s going, how the energy is partitioned among different physical processes are the challenges...Small errors in the physics of the models can lead to exponentially large effects and errors predicting where the threshold of fusion ignition begins.”

For more than a decade, stockpile stewardship experiments at NIF have been adding to another stockpile — that of data researchers use to adjust and improve their models of nuclear device performance. “The basic value of an experiment is to support or disprove the value of your model,” says Cynthia Nitta. Models furnish some physical insight into how a physical system works, but they are never complete representations of the physics.

NIF researchers are working to replicate the shot of August 8, 2021, which yielded about 70 percent of the input energy — the threshold of ignition. Now that NIF has reached this threshold, stockpile science will benefit from an improved understanding of the fusion regime. “One thing that remains to be gained is a complete understanding of fusion ignition, both experimentally and theoretically. I get the feeling we’ll be making great progress on the challenge within a few years,” said Bruce Goodwin, former principal associate director for WCI.

Energetic materials science contributes new materials

During the nuclear testing era, research in chemical explosives provided knowledge essential to understanding how to use them as part of the nuclear explosive package (NEP). The detonation of a chemical explosive rapidly compresses the fissile material, leading to fission ignition in the pit of a nuclear primary. Insensitive high explosives (IHE) have largely replaced conventional high explosives (HE) in weapons systems and are used in LLNL’s modernization programs. IHEs are more stable, making them a safer and more reliable component in the stockpile. Understanding the chemistry of both types of materials has been a decades-long endeavor, with a substantial role played by research at Livermore’s High Explosives Applications Facility (HEAF) and the Contained Firing Facility (CFF) at Livermore’s Site 300. The focal point of R&D at Livermore is the Energetic Materials Center (EMC). Director Lara Leininger said, “We are developing new molecules to use in the life extension programs and modification programs for weapons systems. Replacing existing materials with insensitive high explosives will make these systems safer and more reliable.” EMC researchers have developed new formulations such as LX-14, -17 and -21, which will be the first new explosives to enter the stockpile without underground testing.

LLNL researchers have also studied detonation processes using the intense, pulsed X-rays at Argonne National Laboratory’s Advanced Photon Source (APS), in a beamline called the Dynamic Compression Sector (DCS). These technologies allow researchers to follow the evolution of an explosive’s detonation on nanosecond timescales and nanometer length scales.

Livermore’s computer modeling studies of how hot spots and voids grow within HE materials have successfully predicted the shock wave initiation and detonation reaction zone properties of chemical explosives. Researchers have conducted laboratory studies at HEAF to compare model results to physical reality. Hot spots are the local areas of high temperatures in the explosive material where detonations begin. The physical processes of hot spot initiation are not yet fully understood.

Two-dimensional X-ray images of detonations taken at the DCS have allowed researchers to reconstruct their three-dimensional progression over time. Livermore research teams have been able to track the chemistry and formation of carbon condensate particles, such as nanodiamonds and graphite-layered onion-shaped fragments, in the nanoseconds following a detonation. Research explores how a detonation initiates, the chemical reactions that take place during detonation and physical parameters that characterize the detonation, such as the propagation speed, and temperatures and pressures. The results have led to a better understanding of how HEs and IHEs behave within nuclear devices, providing a better measure of device reliability and safety.

Computing science advances enable better models

Physical experiments provide data for stockpile stewardship, but the SSP significantly elevated the importance of modeling and simulation in stockpile certification. Stockpile stewardship efforts began ramping up about the same time that parallel computing began to replace the older technology of expensive, proprietary purpose-built systems. With the change, more powerful supercomputers based on hundreds of thousands of central processing units linked together became the new standard architecture, providing greater computing power at lower cost per unit of processing. But researchers had to develop new approaches to program these systems. A programming problem had to be broken up so that it was distributed among the many CPUs. Communicating data between CPUs became a major concern. “We learned a lot during the years of parallel computing,” said Rob Neely, program director for the Weapon Simulation and Computing Program. “We began writing new codes to take advantage of these parallel architectures.”
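The two ideas in this passage, breaking a problem into pieces and communicating data between processors, can be illustrated with a toy example. The Python sketch below splits a one-dimensional grid among several simulated workers and has each one borrow a single boundary value (a "halo" cell) from its neighbors before applying a three-point averaging step. It runs in a single process and only mimics the communication pattern; production codes use message-passing libraries, and every name here is hypothetical.

```python
def smooth_serial(u):
    """One three-point averaging sweep; the two end values stay fixed."""
    out = list(u)
    for i in range(1, len(u) - 1):
        out[i] = (u[i - 1] + u[i] + u[i + 1]) / 3.0
    return out


def split(u, n_workers):
    """Cut u into n_workers contiguous chunks of near-equal size."""
    base, extra = divmod(len(u), n_workers)
    chunks, start = [], 0
    for w in range(n_workers):
        size = base + (1 if w < extra else 0)
        chunks.append(u[start:start + size])
        start += size
    return chunks


def smooth_decomposed(u, n_workers):
    """Same sweep, computed chunk by chunk with halo exchange.

    Assumes len(u) >= n_workers so that no chunk is empty.
    """
    chunks = split(u, n_workers)
    result = []
    for w, local in enumerate(chunks):
        # "Communication": copy one boundary value from each neighbor.
        left = [chunks[w - 1][-1]] if w > 0 else []
        right = [chunks[w + 1][0]] if w < n_workers - 1 else []
        swept = smooth_serial(left + local + right)
        # Discard the halo cells; keep only the cells this worker owns.
        result.extend(swept[len(left):len(swept) - len(right)])
    return result


grid = [float(i * i % 7) for i in range(23)]
assert smooth_decomposed(grid, 4) == smooth_serial(grid)
print("decomposed sweep matches the serial sweep")
```

Each worker needs only two values from its neighbors per sweep, yet without them the answer at the chunk boundaries would be wrong. Keeping that exchange cheap relative to the local computation is the communication concern the passage describes.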

Another issue was writing codes so that they could be transferred between different hardware and operating systems. “We learned how to perfect portability abstractions,” said Neely, “which enabled us to port codes from system to system. This allowed us to keep pace with changing technologies.” It also allowed the Laboratory to free itself of dependence on any one vendor, and to scale up simulation codes to extremes.

Beginning with the acquisition of the Sierra machine in 2017, computing researchers have again been challenged with a new change in supercomputing architecture, which is accentuated by the transition to exascale computing. Exascale computers use a heterogeneous architecture in which each computing node combines a CPU with a graphics processing unit (GPU) for faster speeds. Tens of thousands of such nodes are linked, each working on a subset of a simulation. “Current research is focused on how to better schedule jobs on these systems,” explained Neely. “As machines become more complex, we need to think of new ways to schedule workflows efficiently.”

One approach to optimizing software for exascale systems is shifting away from running a code on a single input deck of instructions and toward running ensembles of calculations. A single run can hit problems that shut down the simulation, requiring troubleshooting and a restart. With an ensemble of calculations running, researchers can explore sensitivities to input parameters, gain insight into where the uncertainties in the calculations are, and see where those uncertainties are great enough to distort the simulation. This process of uncertainty quantification (UQ) helps identify which areas of a model need to be better constrained through experiment.
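A minimal sketch of the ensemble idea, in Python, is shown below. It is not a Laboratory workflow: the `toy_simulation` function, its two inputs and their assumed 5 percent uncertainties are all hypothetical stand-ins. The sketch draws many input sets at random, runs the toy model on each, and then reports the spread of the outputs and how strongly each input correlates with the result.

```python
import random
import statistics


def toy_simulation(drive, opacity):
    """Hypothetical stand-in for an expensive simulation code."""
    return drive ** 2 + 0.1 * opacity


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length samples."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def run_ensemble(n_members=500, seed=0):
    """Sample uncertain inputs, run every member, summarize the outputs."""
    rng = random.Random(seed)
    # Assumed input uncertainties: 5 percent Gaussian scatter around 1.0.
    drives = [rng.gauss(1.0, 0.05) for _ in range(n_members)]
    opacities = [rng.gauss(1.0, 0.05) for _ in range(n_members)]
    outputs = [toy_simulation(d, o) for d, o in zip(drives, opacities)]
    return {
        "mean": statistics.fmean(outputs),
        "stdev": statistics.stdev(outputs),
        "corr_drive": pearson(drives, outputs),
        "corr_opacity": pearson(opacities, outputs),
    }


summary = run_ensemble()
for name, value in summary.items():
    print(f"{name:>12}: {value:.3f}")
```

In this toy case the output tracks `drive` far more closely than `opacity`, so `drive` is the input worth constraining with better measurements. That is the kind of guidance the passage attributes to UQ.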

Looking farther ahead, the big question, still unanswered, is what will the generation of supercomputer after exascale look like? “We’re deep in these discussions about what’s next now,” says Neely. “They could include accelerators for artificial intelligence, where a lot of innovation is happening in the computing industry right now that could be integrated into our larger supercomputers — something we are discussing with multiple vendors.”

Advanced materials and manufacturing promise greater efficiency

The science performed at research facilities and simulations developed with HPC help researchers better understand the behavior of the stockpile, but neither of these capabilities can manufacture parts for stockpile life-extension and modification programs (LEPs and Mods). The Laboratory’s advanced materials and manufacturing (AMM) research is providing the technologies to fill this essential role in stockpile stewardship. With many LEPs and Mods underway throughout NNSA’s nuclear security enterprise (NSE), and many of the original manufacturing processes and materials now more than a half-century old or obsolete, newer, better materials, and efficient, lower-cost and more sustainable manufacturing processes are needed.

“Much of what’s in the complex is old technology and legacy material,” says Chris Spadaccini, leader of the Engineering Directorate’s Materials Engineering Division. “These technologies can’t be serviced well, they were designed for larger-scale production than we need today, they are expensive, not agile and adaptable, and take up a large space footprint.” Some of the materials used in the past are no longer available today.

“Today, we need to be able to produce better-performing, longer-lasting materials at a faster timescale, reduced materials waste, and a smaller footprint — we don’t need to produce as much as we once did. The time to modernize is now,” he said.

The Laboratory’s AMM research began in Laboratory Directed Research and Development (LDRD) Program projects and has continued as a Director’s Initiative. Today, AMM is also one of the Laboratory’s seven core competencies. Its research in a variety of manufacturing processes is starting to contribute to a revitalized materials manufacturing capability across the NSE. “Additive manufacturing is the hot topic,” said Spadaccini, “with activity in many areas. These technologies offer faster production timescales, improved ability to design complex parts, and faster qualification of parts for use in the stockpile.” Spadaccini also sees another advantage coming: with new materials replacing obsolete ones, researchers have the chance to test and observe these materials and determine how well they perform. A large database of materials behavior brings data science into the work, and allows researchers to begin to apply artificial intelligence and machine learning tools (AI/ML) to design new materials and speed up manufacturing processes.

Cognitive simulation will accelerate stewardship

Simulations are not perfect representations of experiments. Researchers make approximations in these models to compensate for missing and unknown physics. To improve simulations, Livermore researchers have trained artificial intelligence deep-learning models as surrogates that closely reproduce the simulation code’s output. But the simulation code still cannot predict an experiment such as a NIF laser shot because of the missing physics.

In cognitive simulation, researchers are developing AI/ML algorithms and software to retrain part of this model on the experimental data itself. The result is a model that “knows the best of both worlds,” says Brian Spears, a physicist at NIF and leader of the Laboratory’s Director’s Initiative in cognitive simulation. The model now ‘understands’ the theory from the simulation side and makes corrections to align with experimental data. The new improved model is a better reflection of both the theory and the experimental results.
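The two-stage idea can be sketched with a deliberately simple Python example. It is a toy under stated assumptions, not the Laboratory's method: the real work uses deep-learning surrogates, whereas this sketch uses one-parameter least-squares fits, and the `simulation` and `experiment` functions are invented for illustration. A surrogate is first fitted to plentiful simulation output, and then a small correction term is fitted to a handful of "experimental" points.

```python
def fit_coefficient(features, targets):
    """Least-squares k for the one-parameter model target ~ k * feature."""
    return (sum(f * t for f, t in zip(features, targets))
            / sum(f * f for f in features))


def simulation(x):
    """Hypothetical simulation code that is missing some physics."""
    return 2.0 * x


def experiment(x):
    """Hypothetical measured response, including the missing physics."""
    return 2.0 * x + 0.5 * x ** 2


# Stage 1: train the surrogate on many cheap simulation runs.
sim_x = [0.1 * i for i in range(1, 51)]
k = fit_coefficient(sim_x, [simulation(x) for x in sim_x])

# Stage 2: fit a correction term to a few expensive experiments.
exp_x = [1.0, 2.0, 3.0, 4.0]
residuals = [experiment(x) - k * x for x in exp_x]
c = fit_coefficient([x * x for x in exp_x], residuals)


def corrected_model(x):
    """Simulation-trained surrogate plus experiment-trained correction."""
    return k * x + c * x * x


print(f"surrogate slope k = {k:.3f}, correction coefficient c = {c:.3f}")
print(f"prediction at x = 5: {corrected_model(5.0):.2f}")
```

The first stage captures what the simulation knows, and the second adjusts only the part that disagrees with measurement, which loosely mirrors retraining part of a model on the experimental data itself.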

Cognitive simulation researchers are already building its methods into ICF simulations, stockpile stewardship work and bioscience R&D. Spears sees a place for it throughout the Laboratory’s missions. “Laboratory researchers will use these tools to design new molecules and materials vital to national security priorities,” he said. “Cognitive simulation-based design exploration tools will help them accelerate development of technologies used in the nuclear stockpile. Manufacturing tools will help increase the speed and efficiency of manufacturing parts for the stockpile and reduce materials waste.”

Laboratory scientists will apply cognitive simulation to their stockpile stewardship and other missions through DDMD: discovery; design exploration; manufacturing and certification; and deployment and surveillance. Researchers will design new molecules and materials vital to national security priorities. Exploration tools will help them accelerate development of technologies used in the nuclear stockpile. Manufacturing tools will help increase the speed and efficiency of manufacturing parts for the stockpile, reduce materials waste, and certify parts for use. “With these tools, we will answer the question ‘How can I design a material that performs well in the component I’m building and is also easy to manufacture?’” said Spears.

Ultimately, it’s people

Stockpile stewardship science has provided the data, the discoveries and the developments in technology that have allowed the labs to assess with high confidence the condition of the stockpile from one year to the next and certify life-extension and modernization programs upon completion. Ultimately, it is the stockpile stewards, its people, who make stewardship possible.

Advances in science have contributed to stockpile stewardship, helping to increase confidence in the performance of the stockpile. The final article in this series will look at the people of stockpile stewardship, and how the work has evolved and may evolve as the mission advances.

-Allan Chen

______

LLNL-MI-840097

Contact

Michael Padilla

(925) 341-8692
