High-performance computing, AI and cognitive simulation helped LLNL conquer fusion ignition

LLNL researchers are integrating technologies such as the Sierra supercomputer (left) and NIF (right) to understand complex problems like fusion in energy and the aging effects in nuclear weapons. Data from NIF experiments (inset, right) and simulation (inset, left) are being combined with deep learning methods to improve areas important to national security and our future energy sector. Image by Tanya Quijalvo/LLNL.

Part 10 in a series of articles describing the elements of Lawrence Livermore National Laboratory’s fusion breakthrough.

For hundreds of Lawrence Livermore National Laboratory (LLNL) scientists on the design, experimental, and modeling and simulation teams behind inertial confinement fusion (ICF) experiments at the National Ignition Facility (NIF), the results of the now-famous Dec. 5, 2022, ignition shot didn’t come as a complete surprise.

The “crystal ball” that gave them increased pre-shot confidence in a breakthrough involved a combination of detailed high-performance computing (HPC) design and a suite of methods combining physics-based simulation with machine learning. LLNL calls this “cognitive simulation,” or CogSim.

The detailed HPC design uses the world’s largest supercomputers and its most complicated simulation tools to help subject-matter experts choose new directions to improve experiments. CogSim then employs artificial intelligence (AI) to couple hundreds of thousands of HPC simulations to the set of past ICF experiments.

These CogSim tools are providing scientists with new views into the physics of ICF implosions and a more accurate predictive capability when considering parameters such as laser energy and target-design specifications.

“It’s almost like looking into the future, based on what we've seen in the past about what might happen,” said Brian Spears, LLNL’s deputy modeling lead for ICF. “Our traditional design tools and experts say, ‘These are the knobs that you should adjust,’ and then the new CogSim tools say, ‘Given those adjustments and patterns from prior experiments, that looks like it's going to be really successful.’”

To produce fusion, NIF’s 192 high-powered lasers are concentrated inside a pencil-eraser-sized cylinder called a hohlraum containing a tiny BB-size target encapsulating heavy forms of hydrogen — deuterium and tritium (DT). The lasers’ intense heat creates X-rays inside the hohlraum, causing the capsule to implode and the hydrogen atoms to fuse, releasing neutrons, alpha particles (helium nuclei) and other forms of energy.

If conditions of implosion symmetry, heat and compression are just right, as they were on Dec. 5, the self-sustaining fusion reactions in the resultant plasma — the same process that powers the sun and stars — reach temperatures hot enough to overcome any cooling effects and can briefly generate more energy than the laser delivered to the target. This “break-even” point is known as ignition.

Because of the cost and complexity of ICF experiments at NIF, LLNL researchers rely heavily on HPC modeling and simulation to design new high-performing and symmetrical implosions and predict results in advance. Developed at LLNL over the past several years, CogSim methods such as deep neural networks and “transfer learning” — which conveys knowledge gained from solving one problem to a different, related problem — can learn from multiple fusion experiments at NIF, improving in accuracy as more data is acquired. And the methods are spreading across the Lab’s core mission areas.

“The CogSim tools are adding to our programmatic toolbox, giving us new methods to measure our uncertainties and helping us combine experiments and simulation in new ways,” said physicist Richard Town, who leads the ICF Science program.

Swinging for the fences

CogSim has grown into an important tool for the ICF program, providing detailed post-shot analyses of NIF experiments and helping to quantify sources of degradation that the DT target experiences during a shot, such as implosion asymmetries and the unwanted mixing of materials caused by tiny defects on the capsule’s surface, according to Kelli Humbird, NIF design physicist and CogSim researcher.

“Our team has been pioneering the use of AI and CogSim in ICF and high-energy-density research for several years,” Humbird explained.

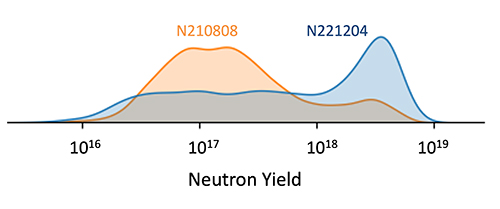

This graph shows the CogSim model’s predicted probability distributions for neutron yield for experiments on Aug. 8, 2021 (labeled N210808 in orange) and Dec. 5, 2022 (labeled N221204 in blue). The N221204 design had a significantly higher chance, at 50.2%, of achieving ignition when compared to the previous record experiment, N210808, at 17.3%. Image by Kelli Humbird/LLNL.

Applying CogSim techniques to ICF research on HPC machines — including LLNL’s flagship Sierra and its unclassified companion Lassen, the Lab’s Jade, Magma, Corona and Pascal systems, and Trinity at Los Alamos National Laboratory — has resulted in faster, better-performing models that can predict outcomes with higher confidence than simulations alone, researchers said.

While Spears cautioned this predictive capability “doesn’t mean that you hit a grand slam every time and these techniques still have much to prove,” it does give researchers a good idea of whether the next ICF experiment will be a home run or a strikeout.

“After the traditional design work and subject-matter experts tell the team what changes to make, we can expose that new design to expected real-world variations to ask, ‘Is this going to stand up to the conditions of NIF?’” Spears said. “The new thing that CogSim methods bring is a more quantitative understanding of which physics degradations are at play and the way they’re correlated. It essentially says, ‘Look, I’ll tell you the probability of whatever physics quantity you want to know.’”

After LLNL’s promising record-breaking shot in August 2021, which yielded 1.35 MJ of fusion energy and put LLNL on the threshold of ignition, the CogSim team “did something a little different,” Humbird said. Based on data from a series of “repeat” experiments with the same target design, the team discovered ways to quantify how a given target design’s performance could vary from shot to shot, a difficult prospect using only traditional design work because targets and laser energy delivery differ with each shot.

By leveraging large ensembles of the hydrodynamics simulation code HYDRA and statistical inference methods, the team modeled degradations the target could be exposed to during the experiment and how they might affect the energy yield.

In September 2022, LLNL teams began a new ICF campaign with an upgraded capability — bumping the laser’s energy up from 1.9 megajoules to 2.05 MJ. LLNL’s design teams, led by Annie Kritcher, modeling lead for integrated experiments, devised ways to improve on the early results and effectively use the additional laser energy. Simulations run using HYDRA showed Kritcher that making the surface of the target capsule — called the “ablator” — thicker would create a more favorable “hot spot” in the implosion and reduce contamination from outside the target.

The integrated design team passed along its target specifications to the CogSim team, which ran a suite of tools: Bayesian inference, neural networks and transfer learning. By integrating the modified design, higher laser energy and adjustments to implosion symmetry with a wealth of knowledge from past NIF experiments, then applying the degradation distribution that it learned from previous shots, the team predicted how the new design would react to the conditions.

Based on the higher-energy laser shots and the integrated HPC-based design adjustments, LLNL scientists were “already ramping up for something to happen” when the CogSim team completed its analysis in late November, Spears said.

The models showed the probability for exceeding “break-even” (more energy out than laser energy in) was essentially a coin flip, just a shade over 50%, with a projected yield of two to three times more than the record 2021 shot. The team produced graphs of the distribution probabilities and presented them to Lab senior leadership a few days before the experiment.
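To make the "coin flip" concrete, here is a minimal sketch of how a probability of exceeding break-even can be read off a predicted yield distribution. It assumes, purely for illustration, that the predicted yield is log-normally distributed; the function name `prob_yield_exceeds` and the median and spread values are hypothetical and are not taken from the CogSim model.

```python
import math
from statistics import NormalDist


def prob_yield_exceeds(threshold_mj, median_mj, sigma_log):
    """P(yield > threshold) when ln(yield) is normally distributed."""
    log_yield = NormalDist(mu=math.log(median_mj), sigma=sigma_log)
    return 1.0 - log_yield.cdf(math.log(threshold_mj))


# Hypothetical predictive distributions, NOT the CogSim model's actual output.
laser_energy_mj = 2.05  # laser energy delivered, from the article

# A design whose median predicted yield sits exactly at break-even.
p_centered = prob_yield_exceeds(laser_energy_mj, median_mj=2.05, sigma_log=0.8)

# A design whose median predicted yield sits well below break-even.
p_lower = prob_yield_exceeds(laser_energy_mj, median_mj=1.0, sigma_log=0.8)

print(f"median at break-even:    P(exceed) = {p_centered:.2f}")
print(f"median below break-even: P(exceed) = {p_lower:.2f}")
```

When the median of the predicted distribution sits at the break-even energy, the probability of exceeding it is one half by construction; shifting the median lower pushes the probability below 50%.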

“We got an answer that said, ‘OK, this design shrugs off lots of things that looked damaging to previous designs, so it's far more robust,’” Spears said. “When we asked the model the important question — ‘How likely is it that we’ll get more energy out than the laser put in?’ — for the first time ever, the answer was more likely than not. When we saw that, it felt very significant. This was the first time that the models, the experiments, the expert design sensibility and our CogSim techniques were all saying this was going to be a big deal. It just felt like a green light popped on the dashboard.”

Humbird called the predictions “exciting and a little nerve-wracking.”

“This was the first time we’d had such a credible, as in, a highly data-informed, with uncertainties that were well quantified, [ignition] prediction ahead of time,” Humbird said. “We really hoped we would be correct.”

Expectations ran high for the shot, which took place a little after 1 a.m. on Dec. 5, 2022. More than 1,000 scientists in total eagerly waited for the preliminary yield numbers to roll in. Sure enough, it played out as the models had predicted, exceeding the National Academy of Sciences definition of ignition: 2.05 MJ of laser energy went in and 3.15 MJ of fusion energy came out, a target gain of about 1.5 (fusion output roughly 150 percent of the laser input).
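The arithmetic behind the headline numbers is simple enough to check directly. The sketch below uses only the two energies quoted above; the function name `target_gain` is an illustrative choice, not an LLNL convention.

```python
def target_gain(fusion_energy_mj, laser_energy_mj):
    """Ratio of fusion energy released to laser energy delivered."""
    return fusion_energy_mj / laser_energy_mj


gain = target_gain(fusion_energy_mj=3.15, laser_energy_mj=2.05)
surplus_fraction = gain - 1.0  # how much the output exceeds the input

print(f"target gain: {gain:.2f}")                                 # 1.54
print(f"output exceeds laser input by {surplus_fraction:.0%}")    # 54%
```

A gain above 1 is what the article calls exceeding "break-even": the fusion output was about 1.5 times the laser energy, or roughly 54 percent more than was delivered to the target.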

Spears awoke early and was checking emails by 6 a.m. He spotted a message from lead experimentalist Arthur Pak, who had been reviewing initial counts of neutrons produced by the experiment.

“We had a CogSim prediction sitting there with a probability distribution over the yields, and the number popped up,” Spears recalled. “In a flash, I could see that it was in the right-hand tail of that 50% probability region. I was more or less paralyzed for a few seconds; I had to look at the exponent and the number to figure out if it actually said what it looked like it said, that it was really what our whole design team predicted and hoped for, and to make sure we weren’t making a mistake or that it was an order of magnitude lower than what it was. Then I was going to pop the champagne.”

Thrilled but cautious, Spears and Humbird began exchanging texts and plotting the initial data on their laptops.

“It takes you a minute to do the quick mental math to convert [the neutron count] to the approximate energy in megajoules,” Humbird said. “Once I did that, I thought, ‘Oh man, this one might be break-even.’ The data is preliminary though, so you don’t want to get too excited right away, but the measurements were falling right within our expectations.”
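The "quick mental math" Humbird describes can be sketched as follows. It assumes the textbook figure of 17.6 MeV released per deuterium-tritium fusion reaction and one neutron per reaction; real yield measurements involve diagnostic corrections that this simplified conversion ignores, and the function names are illustrative.

```python
MEV_TO_JOULES = 1.602176634e-13  # 1 MeV in joules (exact SI value of the eV)
DT_ENERGY_MEV = 17.6             # energy per D-T reaction (neutron + alpha)


def neutrons_to_megajoules(neutron_count):
    """Approximate fusion yield in MJ, assuming one neutron per D-T reaction."""
    joules = neutron_count * DT_ENERGY_MEV * MEV_TO_JOULES
    return joules / 1e6


def megajoules_to_neutrons(yield_mj):
    """Inverse conversion: neutron count implied by a yield in MJ."""
    return yield_mj * 1e6 / (DT_ENERGY_MEV * MEV_TO_JOULES)


# Under these assumptions, a 3.15 MJ yield implies roughly 1.1e18 neutrons.
count = megajoules_to_neutrons(3.15)
print(f"neutrons for 3.15 MJ: {count:.2e}")
print(f"back to MJ: {neutrons_to_megajoules(count):.2f}")
```

This is also why Spears talks about checking "the exponent": a neutron count an order of magnitude lower would correspond to a yield ten times smaller.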

The real celebration would have to wait a week for the peer-review process and experts inside and outside the Lab to complete their verifications. After checking and re-checking neutron counts and reviewing data from independent diagnostics, the U.S. Department of Energy (DOE), the National Nuclear Security Administration (NNSA) and LLNL announced the accomplishment to the world on Dec. 13.

For members of the CogSim ICF team, which also includes researchers Luc Peterson, Jim Gaffney, Rushil Anirudh, Ryan Nora, Eugene Kur, Peer-Timo Bremer, Brian Van Essen, Michael Kruse and Bogdan Kustowski, ignition is just the beginning.

“The tools are really starting to reach a level of maturity that’s making them practical for use on the timescale consistent with the NIF shot rate,” Humbird said. “With the upcoming arrival of [the exascale supercomputer] El Capitan and the corresponding increase in compute power, we see these tools playing a pivotal role in ICF design exploration and optimization. And of course, one good prediction doesn’t validate a model. We’re hoping to do this again for the next several experiments throughout 2023 and really put our tools to the test.”

An expanding role

For LLNL, ignition is a testament to decades of tireless work by hundreds of scientists, engineers, designers and modeling/simulation teams in laser-driven fusion. For Spears, it also represents a culmination of his 18 years in data science for ICF and the CogSim approaches he and LLNL Deputy Associate Director for Computing Jim Brase have co-developed over the past six years. And ignition lends credence to the use of CogSim in other Lab efforts including stockpile stewardship, “self-driving” lasers and predictive biology.

The Cognitive Simulation Institutional Initiative, led by Spears, is funded through the Laboratory Directed Research and Development (LDRD) program. The initiative is part of a broader effort by DOE and NNSA to incorporate emerging AI and machine-learning techniques into mission-relevant projects, with a goal of advancing AI technologies and computational platforms to improve scientific predictions.

The same predictive CogSim capabilities used to drive NIF are being applied by Spears, Timo Bremer in the Center for Applied Scientific Computing, and Tammy Ma, lead for LLNL’s Inertial Fusion Energy Institutional Initiative, to invent new methods for “self-driving” laser operations.

Ignition is still just a first step toward a fusion-energy future that scientists hope will become much cheaper, easier and more efficient over the coming decades. To develop feasible fusion power plants, the facilities will need to accomplish ignition many times per second, making a high repetition rate critical, according to scientists.

Using CogSim tools, researchers could perform fusion experiments, compare them to simulations from real-time data and decide autonomously and on-the-fly how the next experiment should run while performing the necessary adjustments in a matter of milliseconds, far faster than any human could, Spears said.

CogSim also is being used for molecular design across many Lab core mission areas, including biodefense, public health, advanced materials and manufacturing.

According to Brase, the tools are improving simulations of cancer-causing protein interactions in pilot projects with the National Cancer Institute (NCI) and for the Accelerating Therapeutic Opportunities in Medicine consortium — with NCI’s Frederick National Laboratory for Cancer Research and the University of California, San Francisco — leading to better efficacy and safety predictions for new molecules and targets for drug development.

And LLNL’s Program Lead for Predictive Design of Biologics Dan Faissol and his team are finding success in optimizing designs for antibody therapies for the evolving SARS-CoV-2 virus and its many variants.

Brase began coupling machine learning with simulations a decade ago, beginning with cybersecurity applications. He connected with Spears to bring the ideas to ICF research and expanded CogSim to biology through the Biological Applications of Advanced Strategic Computing (BAASiC) initiative. Brase said CogSim has improved science across the Lab by providing faster, bigger and more-efficient models, enhancing predictive power and “steering” simulations to accomplish desired objectives.

In the case of molecular drug design, CogSim is deployed in a multiscale approach, where machine-learning models select areas of interest in macroscale simulations of protein-lipid interactions for further inquiry at a more detailed atomistic level. The approach allows researchers to lengthen the simulated time duration of the interactions by a factor of a million — from nanoseconds to milliseconds — to better examine biological interactions.

Additionally, CogSim can improve a model’s predictive power when experimental data is limited, Brase said. In drug design, CogSim tools can learn from the protein binding of similar molecules to predict binding of novel molecules using only a few experimental measurements. AI models also can direct the overall design process by proposing variations on the molecular structures for the next optimization round.

“Not only can we use CogSim to steer simulations, but we can also use it to determine the best experiments to do to reach a design or modeling goal,” Brase said. “Essentially, we use the CogSim model to design the next experiment, then bring that data back into the models to improve their performance and then repeat the cycle. This ‘active learning’ loop will enable integrated computing and lab automation to make the whole process of building models and designing complex systems for biology or energy ‘self-driving.’ This is an exciting frontier and an area we’re focusing on for the future.”
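The cycle Brase describes (use the model to pick the next experiment, run it, feed the result back, repeat) can be illustrated with a toy loop. This is only a sketch under strong assumptions: `run_experiment` is a hypothetical stand-in for a real experiment, and a nearest-neighbor surrogate with a distance bonus replaces the far richer models used in CogSim.

```python
def run_experiment(x):
    """Hypothetical stand-in for a real experiment; best response at x = 0.7."""
    return -(x - 0.7) ** 2


def active_learning(rounds=6, grid=101, kappa=1.0):
    """Toy loop: score candidate designs, run the best one, update the data."""
    designs = [i / (grid - 1) for i in range(grid)]
    # Seed the data with two initial experiments at the ends of the range.
    observed = {0: run_experiment(designs[0]),
                grid - 1: run_experiment(designs[-1])}

    for _ in range(rounds):
        def acquisition(i):
            # Nearest-neighbor surrogate: predict the outcome of the closest
            # observed design, plus a bonus for being far from any data.
            nearest = min(observed, key=lambda j: abs(i - j))
            distance = abs(i - nearest) / (grid - 1)
            return observed[nearest] + kappa * distance

        candidates = [i for i in range(grid) if i not in observed]
        choice = max(candidates, key=acquisition)
        # "Run" the chosen experiment and bring the result back into the data.
        observed[choice] = run_experiment(designs[choice])

    best = max(observed, key=observed.get)
    return designs[best], observed


best_x, observed = active_learning(rounds=6)
print(f"experiments run: {len(observed)}, best design found: x = {best_x:.2f}")
```

The `kappa` parameter trades off exploiting designs the surrogate already rates highly against exploring regions with no data, which is the basic tension any such loop has to manage.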

As CogSim matures and LLNL scientists look to incorporate AI-for-science methods even more in the coming years, Spears envisions an acceleration in scientific discovery never imagined before.

“It’s great what we just did [with ignition], but the way that this Laboratory operates is that we’re looking 10 years into the future, and we can already see what the next 10 years are,” Spears said. “It’s doing this thing [ignition] that we did once but doing it faster and in a self-driving way that is so quick that discovery happens. It feeds scientists and engineers with the things that they need to know, without them having to labor over setting up the diagnostics. These kinds of things become automated in a way that frees us up to think faster and dream bigger.”

- See Part 1: Star power: Blazing the path to fusion ignition

- See Part 2: Designing for ignition: Precise changes yield historic results

- See Part 3: Ignition experiment advances stockpile stewardship mission

- See Part 4: Laser focused: Power and finesse drove fusion ignition success

- See Part 5: NIF’s optics meet the demands of increased laser energy

- See Part 6: Computing codes, simulations helped make ignition possible

- See Part 7: Diagnostics were crucial to LLNL's historic ignition shot

- See Part 8: Target evolution is a key to LLNL's continued success

- See Part 9: NIF sustainment: Ensuring the next 20 years of progress

Contact

Jeremy Thomas

[email protected]

(925) 422-5539

Related Links

HYDRA

Accelerating Therapeutic Opportunities in Medicine

Biological Applications of Advanced Strategic Computing
