LLNL scientists eagerly anticipate El Capitan’s potential impact

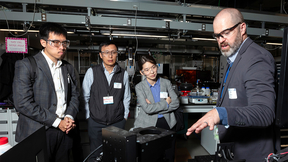

Lawrence Livermore National Laboratory researchers recently used El Capitan's “early access” system (EAS) rzVernal to run a MARBL 3D ALE radiation-hydrodynamics simulation that modeled a laser-driven high-energy density experiment done at the Omega Laser Facility. The researchers were impressed to discover that the code, which was developed for Sierra, ran well on the EAS machine without any additional changes. The model used 80 MI250X GPUs and consisted of 600 million quadrature points. Visualization courtesy of Rob Rieben and Tom Stitt/LLNL.

While Lawrence Livermore National Laboratory is eagerly awaiting the arrival of its first exascale-class supercomputer, El Capitan, physicists and computer scientists running scientific applications on testbeds for the machine are getting a taste of what to expect.

“I'm not exactly sure we’ve wrapped our head around exactly about how much compute power [El Capitan] is going to have, because it is so much of a jump from what we have now,” said Brian Ryujin, a computer scientist in the Applications, Simulations, and Quality (ASQ) division of LLNL's Computing directorate. “I'm very interested to see what our users will do with it, because this machine is going to be simply enormous.”

Ryujin is one of the LLNL researchers using the third generation of early access (EAS3) machines for El Capitan to port codes to the future exascale system. These Hewlett Packard Enterprise (HPE)/AMD systems contain predecessor nodes to those that will make up El Capitan. Despite being a mere fraction of El Capitan’s size and containing earlier-generation components, the EAS3 systems rzVernal, Tenaya and Tioga currently rank among the top 200 of the world’s most powerful supercomputers. All three contain HPE Cray EX235a accelerator blades with 3rd generation AMD EPYC 64-core CPUs and AMD Instinct MI250X accelerators, the same nodes that make up Oak Ridge National Laboratory's Frontier system, which holds the No. 1 spot on the Top500 List and the title of the world’s first exascale system.

By incorporating next-generation processors, including AMD’s cutting-edge MI300A accelerated processing units (APUs), and thousands more nodes than the EAS3 machines, El Capitan promises more than 15 times the peak compute capability of LLNL’s current flagship supercomputer, the 125-petaflop IBM/NVIDIA Sierra, surpassing two exaFLOPs (2 quintillion calculations per second) at peak.
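The "more than 15 times" figure can be checked with simple arithmetic. The short sketch below takes the two peak numbers quoted above (125 petaFLOPs for Sierra, and two exaFLOPs as a lower bound for El Capitan) and computes their ratio. The variable and function names are illustrative only.

```python
# Peak figures as quoted in the article, expressed in petaFLOPs.
SIERRA_PEAK_PFLOPS = 125.0
# "Surpassing two exaFLOPs": 2 exaFLOPs = 2,000 petaFLOPs, treated here as a lower bound.
EL_CAPITAN_PEAK_PFLOPS = 2000.0


def peak_ratio(new_pflops: float, old_pflops: float) -> float:
    """Return how many times larger the new peak is than the old one."""
    if old_pflops <= 0:
        raise ValueError("old_pflops must be positive")
    return new_pflops / old_pflops


ratio = peak_ratio(EL_CAPITAN_PEAK_PFLOPS, SIERRA_PEAK_PFLOPS)
print(f"El Capitan peak is at least {ratio:.0f}x Sierra's peak")
```

Because two exaFLOPs is a floor rather than the final figure, the ratio here is also a floor, which is consistent with the "more than 15 times" claim.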

“El Capitan has the potential of enabling more than 10x increase in problem throughput,” said Teresa Bailey, associate program director for computation physics in the Weapon Simulation and Computing program. “This will enable 3D ensembles, which will allow LLNL to perform previously unimagined uncertainty quantification (UQ) and machine learning (ML) studies."

For months, Ryujin has been running the multi-physics code Ares on the EAS3 platforms, and if the code’s performance to date is any indication, El Capitan’s advantages over Sierra might be nothing short of astronomical.

“Having a very healthy amount of memory gives us a lot more flexibility on how we run calculations and really opens up the possibilities for bigger and more complex multi-physics problems,” Ryujin said. “The really exciting thing is that we're going to be able to run much more efficiently on El Capitan. I expect El Capitan to be used for great multi-physics problems, in addition to kind of the bread-and-butter calculations that we've been doing on Sierra.”

LLNL Weapons and Complex Integration (WCI) computational physicist Aaron Skinner and ASQ computer scientist Tom Stitt have been using rzVernal to run MARBL, a multi-physics (magneto-radiation hydrodynamics) code focused on inertial confinement fusion and pulsed power science. MARBL is one of the newer codes at LLNL, and researchers are still developing many aspects of it, including adding more modeling capabilities and making it perform well on El Capitan and other next-generation machines.

Skinner said he and other physicists are doing a large number and variety of “highly turbulent” physics calculations on rzVernal that are extremely sensitive to very small spatial scales, so increased resolution and higher dimensionality are highly desirable. The EAS machine’s expanded memory has at least doubled MARBL’s performance compared to Sierra on a per-node basis. Additionally, the ability to “oversubscribe” GPUs (assign multiple tasks to a single GPU) has resulted in further performance increases, according to Stitt.
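As a rough illustration of what oversubscription means, the sketch below spreads more tasks than there are GPUs across the devices in round-robin fashion, so each GPU ends up hosting several tasks. The function name and the task and GPU counts are hypothetical, and this is not how MARBL or the system scheduler actually places work.

```python
from collections import defaultdict


def assign_tasks_to_gpus(num_tasks: int, num_gpus: int) -> dict[int, list[int]]:
    """Map task IDs onto GPU IDs round-robin (hypothetical helper, for illustration)."""
    if num_gpus <= 0:
        raise ValueError("num_gpus must be positive")
    mapping: dict[int, list[int]] = defaultdict(list)
    for task in range(num_tasks):
        # Task i goes to GPU (i mod num_gpus), so tasks wrap around the devices.
        mapping[task % num_gpus].append(task)
    return dict(mapping)


# Assumed example: 16 tasks on 8 GPUs, i.e. two tasks share each GPU.
mapping = assign_tasks_to_gpus(num_tasks=16, num_gpus=8)
for gpu, tasks in sorted(mapping.items()):
    print(f"GPU {gpu}: tasks {tasks}")
```

Whether sharing a GPU this way helps depends on the workload. The article reports only that it improved MARBL's performance on rzVernal.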

“The huge increase in available memory, which was a bottleneck on Sierra, and the more powerful GPUs, is really exciting,” Stitt said. “Even though rzVernal is a small machine, it has eight times the memory per node, so we can run a very big experiment on a small number of nodes and get that allocation a lot more easily. The simulation running now is the highest resolution we've ever been able to do for this type of problem.”

Having a bigger and faster Advanced Technology System (ATS) in El Capitan will mean that physicists, who have traditionally used large ensembles of 1D and 2D calculations to form surrogate models, will now be able to create surrogates from large ensembles of 2D and 3D calculations, expanding the design space and simulating physics to a degree they haven’t been able to before, researchers said.

“If a machine comes along that allows you to do 2.5 times better resolution for basically the same cost, then you can get a lot more science done out of that same amount of resources. It allows physicists to do a better job at what they're trying to do, and sometimes it opens doors that were not previously possible,” Skinner explained.
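To see why a resolution gain at the same cost matters more as dimensionality rises, consider a back-of-the-envelope sketch. It assumes a uniform mesh and reads "2.5 times better resolution" as a 2.5x refinement along each axis, so the cell count grows as the refinement factor raised to the number of dimensions. Both assumptions are ours, and this is not a cost model for MARBL or any other LLNL code.

```python
def relative_cell_count(refinement: float, dimensions: int) -> float:
    """Cells needed, relative to the baseline mesh, when each axis is refined by `refinement`."""
    if refinement <= 0 or dimensions < 1:
        raise ValueError("refinement must be positive and dimensions at least 1")
    return refinement ** dimensions


# Assumed per-axis refinement of 2.5x, compared across 1D, 2D and 3D meshes.
for dims in (1, 2, 3):
    factor = relative_cell_count(2.5, dims)
    print(f"{dims}D: {factor:g}x the cells of the baseline mesh")
```

Under these assumptions, the same refinement that multiplies the cell count by 2.5 in 1D multiplies it by more than 15 in 3D, which is why high-resolution 3D runs demand so much more memory and compute.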

Going beyond 2D, El Capitan also will shift the idea of regularly running 3D multi-physics simulations from a “pipe dream” to reality, researchers said. When the exascale era arrives at LLNL, researchers will be able to model physics with a level of detail and realism not possible before, unlocking new pathways to scientific discovery.

“As we really get into exascale, it's not inconceivable anymore that we could start doing massive ensembles of 3D models,” Skinner said. “The physics really do change as you increase the dimensionality of the models. There are physical phenomena, especially in turbulent flows, that just can't be properly modeled in a lower-dimensional simulation; that really does require that three-dimensional aspect.”

In addition to MARBL, Skinner, Stitt and computational physicist/project lead Rob Rieben recently used 80 of the AMD MI250X GPUs on rzVernal to run a radiation-hydrodynamics simulation that modeled a high-energy density experiment done at the Omega Laser Facility. The researchers were impressed to discover the code, which was developed for Sierra, ran well on the EAS machine without any additional changes. LLNL codes largely rely on the RAJA Portability Suite to attain performance portability across diverse GPU- and CPU-based systems, a strategy that has given them confidence in the portability of the codes for El Capitan.

With a background in astrophysics, star-forming clouds and supernovae, Skinner added that he’s looking forward to using El Capitan, whose processors are designed to integrate with artificial intelligence (AI) and machine learning-assisted data analysis, to combine AI with simulation — a process LLNL has dubbed “cognitive simulation.”

The technique could create more accurate and more predictive surrogate models for complex multi-physics problems such as inertial confinement fusion (ICF) at the National Ignition Facility, which in 2021 set a record for fusion yield in an experiment and brought the world to the threshold of ignition. In short, Skinner said, physicists will get better answers to their questions and potentially, in the case of ICF science, save millions of dollars on fusion target fabrication.

“What really makes me smile is getting the computer to act like something that can't easily be experimented on in a laboratory, and the more computing power you can throw at it, the more realistically it behaves,” Skinner said. “I'm really excited about the doors this is going to open up, and the new approaches to scientific discovery that are starting to be explored and enabled by machines like El Capitan that we couldn't even envision doing before. We're entering a time where we don't have to limit ourselves anymore.”

Ryujin, who said he is seeing a similar doubling, or greater, of node-to-node performance over Sierra with the Ares code on rzVernal and Tenaya, said El Capitan will allow scientists to get rapid turnaround on their modeling and simulation jobs. It will enable scientists to address problems that take massive amounts of resources and to run orders of magnitude more simulations at once without interfering with other jobs, opening up new possibilities for uncertainty quantification, parameter studies, design exploration and evaluations of models across large sets of experiments, he added.

“The sheer size of the machine is going to be something to look forward to, both for throughput and the ability to do massive problems,” Ryujin said. “Each generation of nodes is getting significantly more powerful and more capable than the previous generation, so we'll be able to do the same calculations that we used to do on far fewer resources. El Capitan is going to be a gigantic machine, and so these simulation jobs that were really huge before, are going to require just a small percentage of the machine.”

Ryujin said scientists are eagerly calculating how large their computing runs will get on El Capitan, and that he is excited at the prospect of researchers at the National Nuclear Security Administration's three national security laboratories being able to model phenomena at resolutions they never could before.

“One of the joys of working in this space is getting to use these huge machines and cutting-edge technology, so I think it's also just very cool to be able to get to build and develop and run on these really advanced architectures,” Ryujin said. “I’m looking forward to running record-setting calculations; I really like getting the feedback from our physicists that we ran the biggest calculation ever because every time we do that, we learn something, it spawns something new in the program and sparks new directions of inquiry.”

Bailey, who oversees the code development teams for El Capitan, said her goal is to develop a useful computational capability for the machine’s future users.

“The best part of my job is hearing when they use our entire High Performance Computing capability, both the machines and the codes, to solve a complex problem or learn something new about the underlying physics of the systems they are modeling,” she explained. “We are working hard now so that our users will be able to make significant breakthroughs using El Capitan."

Contact

Jeremy Thomas

[email protected]

(925) 422-5539
