COVID-19 HPC Consortium reflects on past year

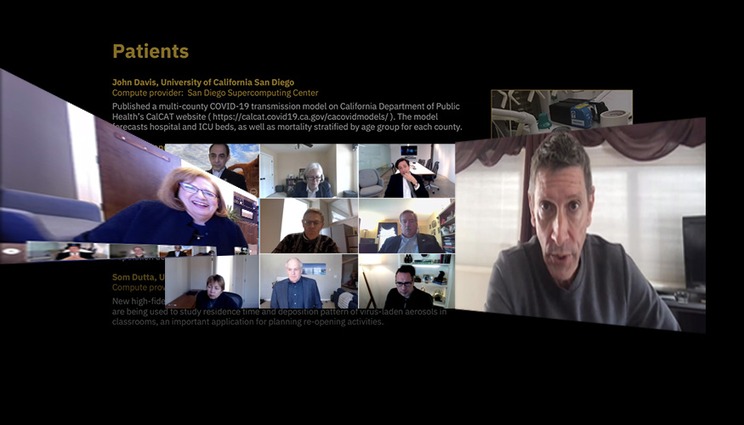

Lawrence Livermore National Laboratory’s Deputy Director for Computing Jim Brase, right, a member of the COVID-19 HPC Consortium’s Science and Computing Executive Committee, presented ongoing research projects for COVID-19 and described how they have accelerated basic science, therapeutics development and patient care during the consortium’s one-year anniversary webinar on March 23. LLNL’s Deputy Director for Science and Technology Pat Falcone, left, a panelist for the consortium’s event, discussed ways in which the Department of Energy national laboratories have historically used computing to aid decision-makers during crises.

COVID-19 HPC Consortium scientists and stakeholders met virtually on March 23 to mark the consortium’s one-year anniversary, discussing the progress of research projects and the need to pursue a broader organization to mobilize supercomputing access for future crises.

The White House announced the launch of the public-private consortium, which provides COVID-19 researchers with free access to Department of Energy and industry supercomputers, on March 22, 2020. The consortium now numbers 43 members, contributing 600 petaflops of computing capacity to scientists in 17 countries.

“This public-private initiative has given researchers around the globe unprecedented access to the world’s most powerful computing resources, which they wouldn’t have had otherwise, to tackle the disease and its impact,” said consortium chair Dario Gil, senior vice president and director of IBM Research. “This consortium has helped scientists significantly accelerate the pace of scientific discovery to advance our understanding of the virus…It is proof that it is possible to get private and public organizations — the government, academia and companies — to collaborate for the sake of a common goal.”

Former consortium Academia board member Maria Zuber, vice president of research at the Massachusetts Institute of Technology, called the consortium and the ad hoc effort that emerged in the pandemic’s frenetic early days “pathbreaking” and “a model for rapid response.”

“This consortium is a realization of my long-held belief that when the world needs us, the science and technology community will be there to help,” Zuber said. “With that in mind, we reflect on the lessons we have learned the past year from the COVID-19 Consortium to inform our way forward to plan for a National Strategic Computing Reserve so that we will be prepared to respond whenever future threats and challenges arise.”

The webinar featured speakers and panelists from government, academia and industry, including representatives from Lawrence Livermore National Laboratory (LLNL). LLNL’s Deputy Director for Computing Jim Brase, a member of the consortium’s Science and Computing Executive Committee, presented a handful of the most promising ongoing research projects and described how they have accelerated basic science, therapeutics development and patient care for COVID-19.

To date, nearly 100 projects have been approved by the consortium, including work by academia and national laboratories to simulate the SARS-CoV-2 protein structure, model the virus’ mutations and virtually screen molecules for COVID-19 drug design. Molecular/protein binding modeling has identified multiple promising drug compounds, many of which are being further explored in clinical trials, Brase said.

“This is evidence that this kind of approach to responding to an emergency situation like this can have real impact, and it’s on multiple timescales,” Brase said.

Brase said the consortium is “making a real difference” in the area of patient care. A project by former LLNL postdoctoral researcher Amanda Randles at Duke University has used consortium compute time to model airflow through a ventilator so that ventilators can be split among different patients. Another project, led by Utah State, simulates indoor aerosol transport and is being used extensively in current reopening plans for schools and other locations.

“High performance computing can really make a difference in some of the practical questions having to do with the pandemic,” Brase said. “We think this is an example that we want to use continuing forward with the National Strategic Computing Reserve and showing the kinds of things that computing can provide when it’s actually made available broadly and in a very timely manner.”

Brase’s talk was followed by a panel on the impact of HPC on crises and the lessons learned from COVID-19 that could improve future emergency response. The panel was moderated by the consortium’s Science and Computing Executive Committee member Barbara Helland, associate director of the DOE Office of Science’s Advanced Scientific Computing Research program.

Panelist and LLNL Deputy Director for Science and Technology Pat Falcone said DOE national laboratories have gained “deep knowledge” in applying computing to crises throughout the past several decades, pointing to work at LLNL’s National Atmospheric Release Advisory Center on the Fukushima earthquake and nuclear disaster, and DOE supercomputing’s role in informing decision-makers during events such as the Deepwater Horizon oil spill, Hurricane Sandy and the Ebola virus outbreak.

“Details really matter in a crisis and scientific computation is a key asset to understanding and figuring out details,” said Falcone, who supports establishing a National Strategic Computing Reserve that can regularly practice and improvise when necessary. “I’m pleased to be a part of this community trying to sort through getting us ready for crises in the future.”

In addition to the HPC Consortium, DOE supercomputing has contributed to the fight against COVID-19 through the National Virtual Biotechnology Laboratory (NVBL), which includes all 17 DOE national laboratories, Falcone explained. NVBL research has led to new manufacturing methods for personal protective equipment, COVID-19 testing and diagnostics, and novel antibody and therapeutic design, Falcone said. It has also used artificial intelligence to advance predictive epidemiological models that are currently being used to advise policymakers.

“We’ll be in a better position for future needs because of this ongoing work,” Falcone said.

The panel included consortium board member Manish Parashar of the National Science Foundation; Microsoft’s AI for Good Research Lab Senior Director Geralyn Miller; and Kelvin Droegemeier, former director of the White House Office of Science and Technology Policy and a professor of meteorology at the University of Oklahoma.

Other presenters and speakers included consortium Science and Computing Executive Committee member John Towns of the National Center for Supercomputing Applications, and consortium Membership and Alliances Executive Committee member Mike Rosenfield of IBM.

Contact

Jeremy Thomas

[email protected]

(925) 422-5539
