LLNL-led team awarded Best Paper at SC19 for modeling cancer-causing protein interactions

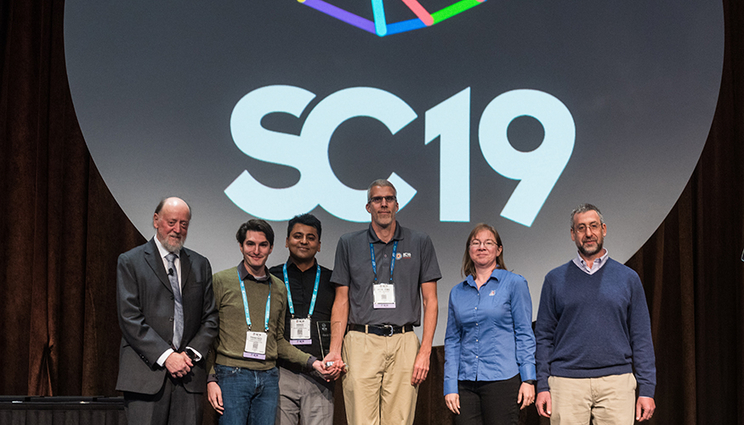

University of Tennessee professor Jack Dongarra (left) and SC19 Papers Chairs Michelle Mills Strout and Scott Pakin (far right) presented Lawrence Livermore National Laboratory computer scientists (from left) Francesco Di Natale, Harsh Bhatia and Peer-Timo Bremer with the Best Paper award on Thursday. The paper included more than a dozen co-authors from LLNL as well as contributions from the National Cancer Institute/Frederick National Laboratory for Cancer Research, Los Alamos National Laboratory, Oak Ridge National Laboratory and IBM.

A panel of judges at the International Conference for High Performance Computing, Networking, Storage and Analysis (SC19) on Thursday presented the conference’s Best Paper award to a multi-institutional team led by Lawrence Livermore National Laboratory computer scientists.

The paper, entitled “Massively Parallel Infrastructure for Adaptive Multiscale Simulations: Modeling RAS Initiation Pathway for Cancer,” describes the workflow driving a first-of-its-kind multiscale simulation for predictively modeling the dynamics of RAS proteins, a family of proteins whose mutations are linked to more than 30 percent of all human cancers, and their interactions with lipids, the organic compounds that help make up cell membranes. Developed as part of the Pilot 2 project in the Joint Design of Advanced Computing Solutions for Cancer program, a collaboration between the Department of Energy (DOE) and National Cancer Institute (NCI), the research resulted in a Multiscale Machine-Learned Modeling Infrastructure (MuMMI) that investigators found was scalable to next-generation heterogeneous supercomputers such as LLNL’s Sierra and Oak Ridge’s Summit.

The multidisciplinary team has worked for more than two years on the pilot project, which is funded by the National Nuclear Security Administration’s Advanced Simulation and Computing program. Composed of more than 20 computational scientists, biophysicists, chemists and statisticians from LLNL, Los Alamos National Laboratory, NCI/Frederick National Laboratory for Cancer Research, Oak Ridge National Laboratory (ORNL) and IBM, the team ran nearly 120,000 simulations on Sierra, using 5.6 million GPU hours of compute time and generating 320 terabytes of data.

“I can’t begin to describe how happy I am for our team — it’s been a lot of hard work, and to have it recognized at this level is just amazing,” said Francesco Di Natale, LLNL computer scientist and the paper’s lead author. “This whole team worked extraordinarily hard to make sure the data that we were getting out actually made sense. The ability to go through that data was a computational challenge in itself.”

“The panel could have chosen a lot of other awesome papers on solvers and other technologies, and to have this paper recognized this year as the top entry really speaks to everyone who worked on it,” added LLNL computer scientist and co-author Peer-Timo Bremer. “They also recognize that it’s a new wave of computing — complex workflows are going to become even more important. This was the largest machine at the largest scale for the largest problem. I think that all came into play, and the fact that it’s a high-impact application doesn’t hurt.”

Researchers reported that MuMMI can simulate the interaction between RAS proteins and eight kinds of lipids to investigate RAS dynamics at the macroscale as well as at the molecular level. While the multiscale approach itself wasn’t novel, the researchers said, using a machine learning algorithm to determine which lipid “patches” are interesting enough to examine more closely with the micromodel had never been done before. The approach saves researchers compute time and money and provides major advantages over randomized patch selection, including wider coverage of the phase space of the RAS lipid neighborhoods.
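To give a rough sense of why guided selection can cover the phase space more widely than random picks, the sketch below uses greedy farthest-point selection over made-up feature vectors. This is an illustration under stated assumptions, not the team's method: the article does not say which algorithm or patch encoding MuMMI uses, and the function names `farthest_point_selection` and `coverage_radius` are hypothetical.

```python
import math


def farthest_point_selection(candidates, already_simulated, k):
    """Greedily pick up to k candidate indices, each time taking the candidate
    farthest from everything simulated or chosen so far.

    Assumption: each patch is summarized as a tuple of floats. How real patches
    are encoded is not described in the article.
    """
    reference = list(already_simulated)
    remaining = list(range(len(candidates)))
    chosen = []
    for _ in range(min(k, len(remaining))):
        def novelty(i):
            # Distance to the nearest point that is already covered.
            if not reference:
                return math.inf
            return min(math.dist(candidates[i], r) for r in reference)

        best = max(remaining, key=novelty)
        chosen.append(best)
        reference.append(candidates[best])
        remaining.remove(best)
    return chosen


def coverage_radius(candidates, selected_points):
    """Largest distance from any candidate to its nearest selected point.
    A smaller value means the selection covers the candidate space more evenly."""
    return max(
        min(math.dist(c, s) for s in selected_points) for c in candidates
    )
```

When most candidates sit in one dense cluster, random picks tend to land inside that cluster, while the greedy rule reaches the rare outlying patches first. Comparing `coverage_radius` for the two selections on the same candidates makes the difference measurable.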

The macro-to-micromodel simulations allowed researchers to see how 300 different RAS proteins interacted on a cell membrane, generating data that can be tested experimentally at the Frederick National Laboratory for Cancer Research (FNLCR) to ensure the models are representative of actual biological results. The models will help FNLCR and the NCI carry out experiments, test predictions and generate more data that will feed back into the machine learning model, creating a validation loop that will produce a more accurate model, researchers said.

“It absolutely required a multidisciplinary effort,” said Sara Kokkila-Schumacher, an IBM researcher who worked on the project while a postdoc at LLNL through the Center of Excellence. “It was a challenging project and the set of tools we had to build really required expertise in a wide variety of areas, including tool development, understanding the biology and getting insight and actual parameters from experimental teams.”

The team has secured funding for the fourth year of the project, which will continue on Lassen, Sierra’s unclassified companion system and the 10th fastest supercomputing system in the world. After various improvements, the next phase will also be run on the world’s most powerful supercomputer, Summit at ORNL.

The paper included 13 other co-authors from LLNL: the DOE lead for the pilot project, Chief Computational Scientist Fred Streitz, currently with DOE’s Artificial Intelligence and Technology Office, along with Harsh Bhatia, Tim Carpenter, Gautham Dharuman, Jim Glosli, Helgi Ingolfsson, Felice Lightstone, Tomas Oppelstrup, Tom Scogland, Shiv Sundram, Michael Surh, Yue Yang and Xiaohua Zhang.

Co-authors from other institutions were FNLCR’s Dwight Nissley, who leads the project for the NCI; Sandrasegaram Gnanakaran and Chris Neale from LANL; Claudia Misale, Lars Schneidenbach, Carlos Costa, Changhoan Kim and Bruce D’Amora of IBM’s Thomas J. Watson Research Center; and Liam Stanton of San Jose State University.

Contact

Jeremy Thomas

[email protected]

(925) 422-5539

Related Links

SC19

Lab leads effort to model proteins tied to cancer

Joint Design of Advanced Computing Solutions for Cancer (JDACS4C)

Multi-institutional meeting centers on making the 'impossible' possible in cancer research

Science & Technology Review

Tags

HPC, Simulation, and Data Science

Computing
