LAB REPORT

Science and Technology Making Headlines

March 22, 2024

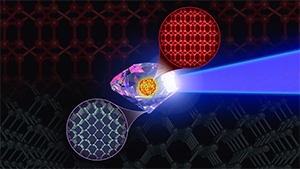

Supercomputer simulations predicting synthesis pathways for the elusive BC8 “super-diamond,” which involve shock compression of a diamond precursor, are inspiring ongoing Discovery Science experiments at the National Ignition Facility (NIF). Image by Mark Meamber/LLNL.

Squeezing diamonds

Diamonds are the hardest naturally occurring material on Earth, but a supercomputer just modeled stuff that’s even harder. Called a “super-diamond,” the theoretical material could exist beyond our planet—and maybe, one day, be created here on Earth.

Like normal diamonds, super-diamonds are made from carbon atoms. This specific phase of carbon, whose basic repeating unit contains eight atoms, should be stable at ambient conditions. In other words, it could exist in an Earth laboratory.

The phase, called BC8, is a high-pressure phase typically found in silicon and germanium. According to Lawrence Livermore researchers and collaborators, the new model suggests that carbon can also adopt this phase when squeezed under enormous pressures.

Frontier, the world’s fastest supercomputer and the first to reach exascale, modeled the evolution of billions of carbon atoms put under immense pressures. The supercomputer predicted that BC8 carbon is 30% more resistant to compression than plain ol’ diamonds.

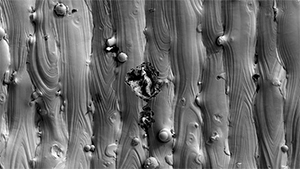

A photo taken by a scanning electron microscope shows a pit at the surface of an additively manufactured (3D-printed) stainless steel part. Image by Thomas Voisin/LLNL.

Slags are corrosive

Like a hidden enemy, pitting corrosion attacks metal surfaces in small, localized spots, which makes it difficult to detect and control. In natural environments, this type of corrosion is caused primarily by prolonged contact with seawater, making it especially problematic for naval vessels.

In recent research, Lawrence Livermore National Laboratory (LLNL) scientists delved into the mysterious world of pitting corrosion in additively manufactured (3D-printed) stainless steel 316L in seawater.

Stainless steel 316L is a popular choice for marine applications due to its excellent combination of mechanical strength and corrosion resistance. This is even more true of 3D-printed 316L, yet this resilient material still isn't immune to the scourge of pitting corrosion.

The LLNL team discovered that the key players in this corrosion drama are tiny particles called "slags," which are produced by deoxidizers such as manganese and silicon. In traditional stainless steel 316L manufacturing, these elements are typically added before casting to bind with oxygen and form a solid phase in the molten metal that can be easily removed after manufacturing.

Researchers found that these slags also form during laser powder bed fusion (LPBF) 3D printing, but instead of being removed, they remain at the metal's surface, where they initiate pitting corrosion.

LLNL researchers helped develop the innovative gamma-ray and neutron spectrometer that will be mounted on the Martian Moons eXploration (MMX) mission spacecraft. Image courtesy of NASA.

Lab instrument to head to Mars’ moons

NASA has delivered its innovative gamma-ray and neutron spectrometer to the Japan Aerospace Exploration Agency (JAXA) for integration into the Martian Moons eXploration (MMX) mission spacecraft, a significant milestone as the mission prepares for final system-level testing.

Developed by the Johns Hopkins Applied Physics Laboratory in collaboration with Lawrence Livermore National Laboratory, the Mars-moon Exploration with Gamma Ray and Neutrons (MEGANE) instrument is set to play a pivotal role in the MMX mission. The mission will analyze the composition and origins of Mars’ moons, Phobos and Deimos, and aims to return a sample from Phobos to Earth.

The research initiative seeks to determine whether these moons are remnants of a major collision between Mars and another large body or asteroids captured by Mars’ gravity. By detecting the neutrons and gamma rays emitted from Phobos’ surface, MEGANE will reveal the moon’s elemental composition, offering insight into its likely origin.

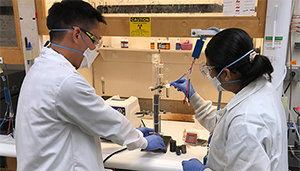

Simon Pang (left) and Buddhinie Jayathilake assemble and prepare a prototype bubble column electrobioreactor to test additively manufactured three-dimensional electrodes. Under their project, excess renewable electricity from wind and solar sources would be stored in chemical bonds as renewable natural gas. Photo by Nathan Ellebracht/LLNL.

Renewable natural gas on the horizon

Lawrence Livermore is collaborating with Southern California Gas Company (SoCalGas) and Electrochaea on an innovative research project that aims to develop a single-stage electro-bioreactor to transform excess renewable electricity and biogas into carbon-neutral synthetic biomethane, also known as renewable natural gas (RNG).

This approach could mark a significant advance in power-to-gas technology and underscores the potential of synthetic biomethane to help decarbonize natural gas infrastructure and its end uses, from residential heating to manufacturing and transportation. SoCalGas has contributed to the project’s technical development and helped fund it; the project is also supported by a $1 million grant from the Department of Energy.

If developed at scale, this technology could increase the yield of RNG produced from carbon dioxide sources like anaerobic digesters, landfills, dairies, fermentation facilities or industrial processes. The hybrid bioreactor and electrolyzer system harnesses the power of Electrochaea’s proprietary microbial biocatalyst, which consumes hydrogen and carbon dioxide, transforming these inputs into RNG.
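For readers curious about the chemistry: the project description does not spell out the reactions, but hydrogenotrophic methanogens, the class of microbes assumed here to underlie the biocatalyst, convert hydrogen and carbon dioxide into methane according to the textbook overall reaction below. Assuming the electrolyzer supplies that hydrogen by splitting water with renewable electricity, the two steps combine into a net conversion of carbon dioxide and water into methane and oxygen.

$$
\begin{aligned}
\text{Electrolysis:}\quad & 4\,\mathrm{H_2O} \rightarrow 4\,\mathrm{H_2} + 2\,\mathrm{O_2} \\
\text{Methanogenesis:}\quad & \mathrm{CO_2} + 4\,\mathrm{H_2} \rightarrow \mathrm{CH_4} + 2\,\mathrm{H_2O} \\
\text{Net:}\quad & \mathrm{CO_2} + 2\,\mathrm{H_2O} \rightarrow \mathrm{CH_4} + 2\,\mathrm{O_2}
\end{aligned}
$$

Adding the first two lines cancels the four hydrogen molecules and two of the four water molecules, so in this idealized picture each molecule of carbon dioxide drawn from a source such as biogas yields one molecule of methane, with electricity supplying the energy.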

“We believe this technology will help enable decarbonization of the natural gas grid infrastructure by providing a renewable source of natural gas,” said Simon Pang, a materials scientist in LLNL’s Materials Science Division who heads the project.

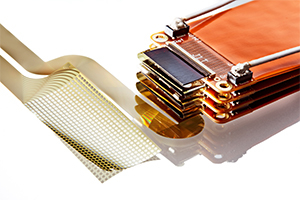

Under a three-year collaboration, LLNL scientists and engineers will work with Precision Neuroscience to develop future versions of the company’s flexible, thin-film neural implant for patients with a variety of neurological disorders. Photo by Randy Wong/LLNL.

The brains behind neural implants

Lawrence Livermore National Laboratory (LLNL) is joining forces with New York-based Precision Neuroscience Corp. to advance the technology of neural implants for patients suffering from a variety of disorders, including stroke, spinal cord injury and neurodegenerative diseases such as amyotrophic lateral sclerosis (ALS).

Under a three-year research and development agreement, LLNL will work with Precision to develop future versions of the company’s neural implant, a thin-film microelectrode array called the Layer 7 Cortical Interface.

The brain implant is designed to allow users to operate computer systems entirely through thought, which could benefit patients who have lost motor coordination or the ability to speak.

The project will draw on LLNL’s experience developing flexible, thin-film multielectrode neural implants, as well as its microfabrication facility and regulatory-compliant quality management system for manufacturing prototype medical devices.