LAB REPORT

Science and Technology Making Headlines

April 12, 2024

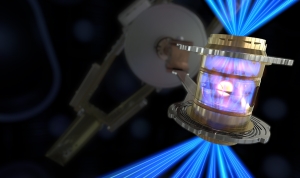

To create fusion ignition, the National Ignition Facility’s laser energy is converted into X-rays inside the hohlraum. The X-rays then compress a fuel capsule until it implodes, creating a high-temperature, high-pressure plasma.

Fusion is here and now

Physicists may remember Dec. 5, 2022, as the day nuclear fusion got its legs and walked for the first time. That’s when Lawrence Livermore’s National Ignition Facility (NIF) produced more energy from a fusion reaction than the laser energy used to drive it, the first time that had happened in more than 50 years of research. Since then, it has happened four more times.

Many energy experts consider nuclear fusion the North Star: a way to generate cleaner and more affordable electricity than fossil fuels without creating long-term nuclear waste. Right now, major corporations, including Microsoft, Chevron and Shell, are putting billions of dollars into the field. While success is in sight, some specialists caution against creating too much hype.

“Although there are enormous science and engineering challenges still to overcome, the breakthroughs and the progress have been steady,” said Tammy Ma, lead scientist for the Inertial Fusion Energy Initiative at Lawrence Livermore National Laboratory, during the United States Energy Association’s virtual press briefing.

NIF produced more energy than it put in, which “means the plasma is feeding back on itself and generating more energy. So we are fundamentally in a different place than we were before,” she said. “We recently repeated ignition four more times since December of 2022, and our most recent shot was more than twice as much energy out as went in. That gives you a sense of the progress we're making. But to be clear, there's still much more to do.”
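The "energy out versus energy in" comparison Ma describes is a simple ratio, often called target gain. The sketch below is illustrative only: the function names and the shot values are assumptions chosen to show the arithmetic, not NIF data or LLNL code.

```python
def target_gain(energy_out_mj: float, energy_in_mj: float) -> float:
    """Return fusion energy released divided by laser energy delivered."""
    if energy_in_mj <= 0:
        raise ValueError("energy_in_mj must be positive")
    return energy_out_mj / energy_in_mj


def is_net_gain(gain: float) -> bool:
    """A gain above 1 means more energy came out than went in."""
    return gain > 1.0


# Hypothetical shot values, chosen only for illustration (not measured data).
example_gain = target_gain(energy_out_mj=4.4, energy_in_mj=2.0)
print(f"gain = {example_gain:.2f}, net gain: {is_net_gain(example_gain)}")
```

A shot with "more than twice as much energy out as went in" corresponds to a gain above 2 in this sketch.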

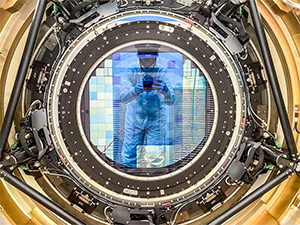

The LSST Camera team successfully installed the cryostat to the camera body on 8 April 2022 – Photo by Travis Lange/SLAC National Accelerator Laboratory.

Eye spy the universe

Work on the most powerful camera ever built has been completed.

The 3,200-megapixel Legacy Survey of Space and Time (LSST) camera is the size of a small car and weighs around 3,000 kilograms. The camera will soon be installed in the newly completed Vera C. Rubin Observatory in Chile, and over the next 10 years will build an amazingly detailed image of the Southern Hemisphere sky.

Also involved in the construction of the camera were Brookhaven National Laboratory, which built the camera's digital sensor array; Lawrence Livermore National Laboratory, which designed and built lenses for the camera; and the National Institute of Nuclear and Particle Physics at the National Center for Scientific Research in France.

“With the completion of the unique LSST Camera at SLAC and its imminent integration with the rest of Rubin Observatory systems in Chile, we will soon start producing the greatest movie of all time and the most informative map of the night sky ever assembled,” said Director of Rubin Observatory Construction and University of Washington professor Željko Ivezić.

Europium, a rare earth element that has the same relative hardness as lead, is critical to the clean energy economy. Image by LLNL.

It’s critically important

As part of President Biden’s Investing in America agenda, the Department of Energy’s Office of Fossil Energy and Carbon Management has awarded $75 million for a project to develop a Critical Minerals Supply Chain Research Facility.

The project, funded by the Bipartisan Infrastructure Law, will strengthen domestic supply chains, help to meet the growing demand for critical minerals and materials and reduce reliance on unreliable foreign sources. The project also supports President Biden’s Executive Order 14017, which has made it a policy of the United States to have resilient, diverse and secure critical mineral and material supply chains. These supply chains are central to U.S. energy security, economic prosperity and national security, as they underpin many clean energy technologies, vital manufacturing processes and several key defense applications.

The project selected to support the development of a Critical Materials Supply Chain Research Facility will establish a nationwide foundational capability to address critical minerals and materials supply chain challenges. The National Energy Technology Laboratory will lead the Minerals to Materials Supply Chain Facility (METALLIC) project, which includes participation from eight other DOE national laboratories — Ames National Laboratory, Argonne National Laboratory, Idaho National Laboratory, Lawrence Berkeley National Laboratory, Lawrence Livermore National Laboratory, National Renewable Energy Laboratory, Oak Ridge National Laboratory and Pacific Northwest National Laboratory.

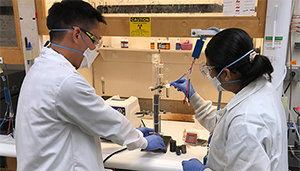

Simon Pang (left) and Buddhinie Jayathilake assemble and prepare a prototype bubble column electrobioreactor to test additively manufactured three-dimensional electrodes. Under their project, excess renewable electricity from wind and solar sources would be stored in chemical bonds as renewable natural gas. Photo by Nathan Ellebracht/LLNL.

Energy storage hits the gas

Lawrence Livermore National Laboratory (LLNL), Southern California Gas Company and the technology firm Electrochaea have partnered on a plan to add grid-scale storage in the form of synthetic natural gas.

The idea is to create a bioreactor that would transform excess solar- and wind-generated electricity and biogas — methane generated from waste products — into renewable natural gas (RNG).

While spikes in power usage have led to power outages in recent summers, instances of too much renewable power also have strained the power grid. Such scenarios force grid operators to curtail renewable generation to avoid damage to the grid.

More than 1.5 million megawatt-hours of renewable electricity were curtailed in California in 2020, according to LLNL. That effectively threw away enough energy to power 100,000 homes for a year, because the grid lacks suitable storage mechanisms to hold surplus energy for later use.
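As a rough check on those two figures, the short sketch below divides the curtailed energy by the number of homes to get the implied consumption per home. It uses only the numbers quoted above; the variable names are illustrative, and because the curtailment figure is "more than" 1.5 million, the result is a lower bound.

```python
# Figures quoted in the article (attributed to LLNL).
CURTAILED_MWH = 1_500_000   # lower bound: "more than 1.5 million" megawatt-hours
HOMES_POWERED = 100_000     # homes that energy could supply for one year

# Implied annual consumption per home, in megawatt-hours.
mwh_per_home_per_year = CURTAILED_MWH / HOMES_POWERED

# The same figure expressed as kilowatt-hours per month.
kwh_per_home_per_month = mwh_per_home_per_year * 1000 / 12

print(f"{mwh_per_home_per_year:.1f} MWh per home per year")
print(f"{kwh_per_home_per_month:.0f} kWh per home per month")
```

In other words, the comparison implies each home uses about 15 megawatt-hours a year.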

Simon Pang, a materials scientist in LLNL’s Materials Science Division, will head the two-year, $2 million project. He said the hope is to “enable decarbonization of the natural gas grid.”

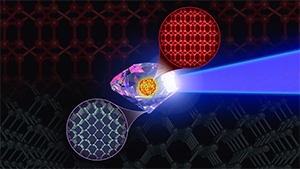

Supercomputer simulations predicting the synthesis pathways for the elusive BC8 “super-diamond,” involving shock compressions of diamond precursor, inspire ongoing Discovery Science experiments at NIF. Image by Mark Meamber/LLNL.

Super diamond is one tough nut

Scientists have simulated an elusive, superstrong form of carbon, dubbed “super-diamond,” that may be tougher than diamonds, the hardest known material. But observing the real thing might require a trip far outside our solar system, to the center of an exoplanet — a feat that's not likely anytime soon, or possibly ever.

BC8, as the superstrong carbon is known, is an eight-atom crystal that would be 30% more resistant to compression than diamond, according to a new study by Lawrence Livermore and collaborators. Scientists have been trying to synthesize this crystal in the lab, without success. The new simulation reveals that the material can be made only in a narrow range of pressures and temperatures, knowledge that might make synthesis possible in the future, researchers said.

The research also helps reveal what might be at the hearts of carbon-rich exoplanets, which are predicted to have just the right conditions for the formation of BC8.

Armed with their new knowledge of BC8’s formation pathways and stability, the researchers are making new attempts to synthesize the material at LLNL's National Ignition Facility. These experiments involve shocking diamonds twice at upward of 45,000 mph and then compressing them under enormous pressures.