Using models, 3D printing to study common heart defect

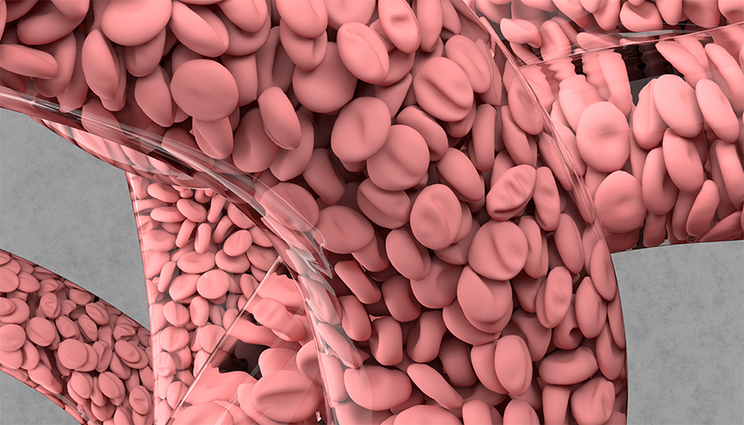

Lawrence Livermore researchers and collaborators have combined machine learning, 3D printing and high performance computing simulations to accurately model blood flow in the aorta. Shown is a simulation of arterial blood flow using HARVEY, fluid dynamics software developed by Lawrence Fellow Amanda Randles. Visualization by Liam Krauss/LLNL.

One of the most common congenital heart defects, coarctation of the aorta (CoA) is a narrowing of the main artery transporting blood from the heart to the rest of the body. It affects more than 1,600 newborns each year in the United States and can lead to health issues such as hypertension, premature coronary artery disease, aneurysms, stroke and cardiac failure.

To better understand risk factors for people with CoA, a large team of researchers, including a former Lawrence Fellow and her mentor at Lawrence Livermore National Laboratory (LLNL), combined machine learning, 3D printing and high performance computing simulations to accurately model blood flow in the aorta. Using the models, validated on 3D-printed vasculature, the team was able to predict how physiological factors such as exertion, elevation and even pregnancy affect CoA, a condition that forces the heart to pump harder to get blood to the body. The work was published in the journal Scientific Reports.

Proposed as an Institutional Computing Grand Challenge project at LLNL by then-Lawrence Fellow Amanda Randles (now the Mordecai assistant professor of biomedical sciences at Duke University) and her mentor, LLNL computer scientist Erik Draeger, the work represents the largest simulation study to date of CoA, involving more than 70 million compute hours of 3D simulations done on LLNL’s Blue Gene/Q Vulcan supercomputer.

“You can take these simulations and really understand the realistic range of effects on people with this condition, beyond the factors present when the patient is sitting at rest in a doctor’s office,” Draeger said. “It also describes a protocol where, although you still need to do simulations, you don’t need to do all the configurations there are. One of the things that’s really interesting about this type of study is that, until you can do this level of simulation, you have to go by average results. Whereas with this, you can take an image of the aorta of that specific person and model the stress on the aortic walls.”

On Vulcan, Draeger, Randles and their team ran simulations of the aorta with stenosis, a narrowing in the left side of the heart that creates a pressure gradient through the aorta and on to the rest of the body. The simulations used HARVEY, fluid dynamics software developed by Randles to model blood flow, and were run on 3D geometries of the aorta derived from computed tomography (CT) and MRI scans. Because the aorta is so large and its flow is so chaotic, Randles, who has a background in biomedical simulation and HPC, rewrote the HARVEY code to optimize it for Vulcan so the team could run the enormous number of simulations needed to model the flow accurately.

The researchers then varied the degree of stenosis, blood flow rate and viscosity, using the models to predict two diagnostic metrics (the pressure gradient across the stenosis and the wall shear stress on the aorta) to reflect the real-world impact of a person’s lifestyle choices on CoA.
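For intuition only, both metrics have simple closed forms in the idealized case of steady, laminar flow of a Newtonian fluid through a straight, rigid tube of radius $R$ and length $L$ (Hagen-Poiseuille flow), with viscosity $\mu$ and volumetric flow rate $Q$. These textbook formulas are an assumption made for illustration, not the formulation HARVEY uses; real aortic flow is pulsatile, three-dimensional and far more complex.

$$
\Delta P = \frac{8\,\mu\,L\,Q}{\pi R^{4}}, \qquad \tau_w = \frac{4\,\mu\,Q}{\pi R^{3}}
$$

In this idealized picture both metrics grow in proportion to viscosity and flow rate, while the pressure drop scales as $R^{-4}$: halving the radius of a narrowed segment raises its pressure drop sixteenfold at the same flow rate. In a real, patient-specific aorta the factors do not combine this simply, which is why the study relied on full 3D simulations.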

“We were looking at how different physiological characteristics can change the flow profile,” Randles said. “If the person is running, if they’re running at altitude, if they’re pregnant — how would that change things like the pressure gradient across the narrowing of the vessel? That can influence when doctors are going to take action. You can’t capture the full state of that patient in just one simulation.”

Randles said the simulations indicated a synergy of viscosity and velocity of the blood at different points of the aorta, which also was influenced by the specific geometry of a particular patient. The relationships among the various physiological factors weren’t intuitive or linear, she added, requiring a large supercomputer like Vulcan combined with machine learning to fully understand the complex interplay among them.

To create a framework for building a predictive model from the minimum number of simulations needed to capture all the physiological factors, the team trained machine learning models on data gathered from all 136 blood flow simulations performed on Vulcan. Machine learning enabled the team to reduce the number of viscosity/velocity pairings that had to be simulated from hundreds to nine, making it feasible to someday develop patient-specific risk profiles, Randles said.
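The article does not say which machine learning model the team used, so the sketch below only illustrates the general surrogate idea: run a small number of expensive simulations on a grid of viscosity/velocity pairs (nine here, matching the count quoted above), fit a cheap model to their outputs, then use it to predict pairs that were never simulated. Everything in it is hypothetical: `expensive_simulation` is a synthetic power law standing in for a HARVEY run, the parameter values and units are invented, and a log-linear least-squares fit is a deliberately simple stand-in for the team's actual model.

```python
import itertools
import math


def expensive_simulation(viscosity, velocity):
    """Hypothetical stand-in for one CFD run, returning a pressure gradient.
    Synthetic power law for illustration only, NOT real hemodynamic data."""
    return 12.0 * viscosity**0.3 * velocity**1.8


def solve3(a, b):
    """Solve the 3x3 system a @ x = b by Gaussian elimination with partial pivoting."""
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    n = 3
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_power_law(samples):
    """Least-squares fit of log y = log k + p*log(mu) + q*log(v) via normal equations."""
    xtx = [[0.0] * 3 for _ in range(3)]
    xty = [0.0] * 3
    for mu, v, y in samples:
        row = [1.0, math.log(mu), math.log(v)]
        log_y = math.log(y)
        for i in range(3):
            xty[i] += row[i] * log_y
            for j in range(3):
                xtx[i][j] += row[i] * row[j]
    log_k, p, q = solve3(xtx, xty)
    return math.exp(log_k), p, q


# Hypothetical 3x3 design: nine "expensive" runs instead of hundreds.
viscosities = [3.0, 4.0, 5.0]  # made-up values
velocities = [0.5, 1.0, 1.5]   # made-up values
training = [(mu, v, expensive_simulation(mu, v))
            for mu, v in itertools.product(viscosities, velocities)]

k, p, q = fit_power_law(training)


def surrogate(mu, v):
    """Cheap prediction for an untested viscosity/velocity pair."""
    return k * mu**p * v**q


print(f"k={k:.3f}, p={p:.3f}, q={q:.3f}")  # k=12.000, p=0.300, q=1.800
print(surrogate(4.5, 1.2))  # prediction at a pair that was never "simulated"
```

Because the synthetic data follow an exact power law, the fit recovers its coefficients; with real simulation output, a more flexible model and held-out validation runs would be needed to judge how far the surrogate can be trusted.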

“The ideal is that in the future, when a new patient comes in you wouldn’t have to run 70 million compute hours, you would only have to do enough to get those few simulations,” Randles said. “It’s the first step to not requiring a supercomputer in the hospital. We want to be able to give enough training data and a machine learning framework they can employ to do just a few simulations that maybe would fit on a local cluster or something much more accessible, while also leveraging results from the large-scale supercomputing.”

To validate the models, researchers at Arizona State University 3D-printed aortas and completed benchtop experiments reproducing blood flow for comparison with the simulation results. 3D printing allowed the team to generate profiles of the aorta and extract data on wall shear stress, velocity and other factors important to understanding flow, Randles said.

Researchers said the combination of machine learning and experimental design could have a broad impact on the computational community and would be useful for any large study interested in ensuring the best use of resources. And for clinicians, it could provide new insights into certain risk factors to monitor, as well as inform future clinical studies.

The team wants to apply the new framework to other diseases like coronary artery disease and follow up on the CoA work to better understand why certain physiological factors are more crucial to determining health risk. While the ultimate goal is to see the models used in a clinical environment, a more comprehensive study on the impacts of certain factors on CoA will need to be done, researchers said. Further work will require partnerships with clinicians and more datasets from patients with known outcomes, according to Draeger.

For now, predictions based on medical imaging and simulation still require a great deal of time and effort to generate an actionable result, Draeger said. But as researchers perform more studies, it is likely that the neural networks and other models can be refined so that fewer simulations will be needed to make predictions that clinicians can have confidence in.

Draeger said that by leveraging its expertise in physics, simulation, applied math and machine learning, as well as its access to supercomputers, LLNL is in a strong position to partner with biologists to impact medicine and health in the future through high performance computing modeling and simulation.

“We’re just now getting to the point that high performance computing and simulation is at enough fidelity and speed that you can actually cross over directly with clinical medicine,” Draeger said. “We’ve been getting closer and closer but invariably, simulations are too slow. But we’re now at a point where it’s not impractical, especially with machine learning to cut down on the costs, to imagine that you could actually do a simulation study of a specific person and use it to impact their care in the not-too-distant future.”

Funding for the work at LLNL was provided by the Laboratory Directed Research and Development (LDRD) program and the Lab’s Institutional Computing Grand Challenge program. Further grant money for the study was made available by the National Institutes of Health.

Co-authors included researchers from Duke University, Brigham and Women’s Hospital at Harvard Medical School, Arizona State University, the Dana-Farber Cancer Institute, the Harvard T.H. Chan School of Public Health, Harvard University and the Broad Institute of Harvard and MIT.

Contact

Jeremy Thomas

(925) 422-5539

Related Links

Scientific Reports

Duke University
