Researchers developing deep learning system to advance nuclear nonproliferation analysis

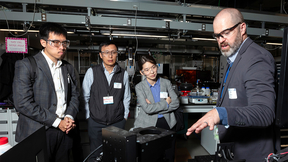

Lawrence Livermore National Laboratory computer scientist Barry Chen is heading a project to develop deep learning and high-performance computing algorithms that can sift through massive amounts of data for evidence of nuclear proliferation activities to accelerate nonproliferation analysis at a scale beyond what humans could possibly handle manually. Photo by Randy Wong/TID

Artificial neural networks are all around us, deeply embedded in routine functions on the internet. They help online merchants make personalized shopping recommendations, enable social media sites to recognize faces in photos and assist email programs in filtering out spam. Neural networks also have the potential to play a critical role in national security, helping nonproliferation analysts prevent rogue states or nefarious actors from building a nuclear weapon.

To accelerate the pace of nonproliferation analysis and scale it to much larger data sets than humans could possibly handle manually, researchers at Lawrence Livermore National Laboratory (LLNL) are developing new deep learning and high-performance computing algorithms that can sift through massive amounts of data for evidence of nuclear proliferation activities. The backbone of the project is LLNL’s deep learning framework, dubbed the "Semantic Wheel." This framework uses neural networks to "map" multimodal data (i.e., images, text and video) into a shared feature space, where the proximity of any two items reflects how closely related their meanings are, akin to a Dewey Decimal System for multimodal data.

"No one person can look at all these data," said LLNL computer scientist Barry Chen, the project’s principal investigator. "But if we can teach computers to filter out the chaff, our analysts might be able to zero in on key indicators faster and more thoroughly. What our deep learning system could do is categorize each piece of data -- be it images, video, or potentially any type of input -- based on how it relates to the processes to build a weapon. This way, the system does the initial level of screening, making it much more manageable for the analyst. Analysts then would look at the data that the system suggests as being related to the weaponization process and be able to evaluate how much evidence there is today that someone could complete those steps."

For example, once fully realized, the Semantic Wheel would allow a nonproliferation analyst to find previously unlabeled images and videos related to uranium enrichment using a simple text query for "uranium enrichment." To complete this search, the Semantic Wheel would return data of any type neighboring the text phrase "uranium enrichment" in the feature space. This would help analysts quickly find relevant uranium enrichment images and videos without having to manually look at millions of images or watch hundreds of hours of video.
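The retrieval step can be illustrated as a nearest-neighbor search in a shared feature space. The sketch below is a minimal illustration under stated assumptions, not LLNL’s implementation: the item names, the three-dimensional vectors and the query embedding are all hypothetical stand-ins for what trained networks would produce, and cosine similarity is assumed as the closeness measure.

```python
import math

# Hypothetical toy embeddings in a shared 3-dimensional feature space.
# In a real system each vector would come from a modality-specific network
# followed by a mapping network; these numbers are made up for illustration.
CORPUS = {
    "image_0413.jpg": ("image", [0.90, 0.10, 0.05]),
    "video_clip_22.mp4": ("video", [0.80, 0.25, 0.10]),
    "report_17.txt": ("text", [0.10, 0.95, 0.20]),
    "image_0099.jpg": ("image", [0.05, 0.15, 0.98]),
}

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def search(query_vector, corpus, k=2):
    """Return the k items of any modality whose vectors are closest to the query."""
    scored = [
        (cosine_similarity(query_vector, vec), name, modality)
        for name, (modality, vec) in corpus.items()
    ]
    scored.sort(reverse=True)  # highest similarity first
    return [(name, modality) for _, name, modality in scored[:k]]

# Hypothetical embedding of the text query "uranium enrichment".
query = [0.85, 0.20, 0.05]
print(search(query, CORPUS))
```

Because the search ranks vectors rather than keywords, the untagged image and video clip whose vectors sit near the query are returned together, while the two items whose vectors point elsewhere are not.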

"With a system like this, analysts will be able to systematically search more data, including untagged images and videos, for evidence of potential proliferation activity," added LLNL’s Yana Feldman, the project’s nonproliferation analysis lead. "It’s giving the analysts a way to connect the dots more efficiently and in ways they haven’t been able to before."

A particularly powerful aspect of the Semantic Wheel, researchers explained, is that it can find conceptually related data even when those data lack keyword tags. This is a critical feature considering that most data that exist "in the wild" are untagged. It also addresses a key challenge for machine learning researchers training AI systems: the limited availability of human-annotated data, such as images and video with text describing their contents. State-of-the-art neural networks are starving for human-annotated data, requiring millions of samples for their "supervised" learning algorithms. To tackle this challenge, the LLNL team has developed new "self-supervised" learning algorithms that don’t require humans to perform the time-consuming task of hand-labeling data. This enables researchers to exploit the nearly unlimited amount of unlabeled data for training better neural networks.
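To make the idea of self-generated labels concrete, here is a sketch of one well-known family of self-supervised pretext tasks, context prediction: a network is shown a center patch and one of its eight neighbors and must predict where the neighbor sits. The article does not specify the LLNL team’s exact pretext task, so this is an illustrative assumption; the function names and the toy image are hypothetical.

```python
import random

# Offsets (row, col) of the 8 neighbors around a center patch, in patch units.
# The index of each offset in this list serves as the automatically generated label.
NEIGHBOR_OFFSETS = [(-1, -1), (-1, 0), (-1, 1),
                    (0, -1),           (0, 1),
                    (1, -1),  (1, 0),  (1, 1)]

def crop(image, top, left, size):
    """Return a size x size patch of a 2D list starting at (top, left)."""
    return [row[left:left + size] for row in image[top:top + size]]

def make_context_example(image, patch_size, rng):
    """Build one (center_patch, neighbor_patch, label) example (hypothetical helper).

    The label is derived from the raw image itself, so no human annotation is needed.
    Requires the image to be at least 3 * patch_size in each dimension.
    """
    height, width = len(image), len(image[0])
    # Choose a center position that leaves room for a full 3x3 grid of patches.
    top = rng.randint(patch_size, height - 2 * patch_size)
    left = rng.randint(patch_size, width - 2 * patch_size)
    label = rng.randrange(len(NEIGHBOR_OFFSETS))
    dr, dc = NEIGHBOR_OFFSETS[label]
    center = crop(image, top, left, patch_size)
    neighbor = crop(image, top + dr * patch_size, left + dc * patch_size, patch_size)
    return center, neighbor, label

rng = random.Random(0)
# A toy 12x12 "image" whose pixel values encode their own coordinates.
image = [[r * 100 + c for c in range(12)] for r in range(12)]
center, neighbor, label = make_context_example(image, patch_size=4, rng=rng)
print(label, center[0][0], neighbor[0][0])
```

In a typical setup, many such examples train a network to predict the label; the position classifier is then discarded and the learned features are kept for downstream use.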

Through self-supervised learning, Chen said, the neural network automatically learns which features are important for it to "see" or "read," separately for each data type. The team then trains additional "mapping" neural networks to project image, video and text features of related concepts into proximal locations in the Semantic Wheel feature space. This is a hub-and-spokes model: the learned features of each data type are the spokes, and the final feature space is the hub. This last step does require human-annotated data; however, researchers have noted that the success of up-front, self-supervised learning of unimodal features drastically reduces the overall amount of data the system requires to learn the mapping networks and retrieve cross-modal data.
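The mapping step can be sketched as fitting a projection from one spoke’s features into the hub space using paired examples. This is a deliberately simplified assumption: the article describes the mapping networks only as neural networks, whereas the sketch below uses a single linear layer trained with plain gradient descent on squared distance. The toy feature vectors and helper names are hypothetical, and the spoke features are assumed to be already computed and held fixed.

```python
import random

def matvec(W, x):
    """Multiply a matrix W (a list of rows) by a vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def train_linear_mapping(pairs, in_dim, out_dim, lr=0.1, epochs=500, seed=0):
    """Fit W so that matvec(W, spoke_feature) lands near its paired hub vector.

    Hypothetical helper: a single linear layer trained by full-batch gradient
    descent on mean squared distance, standing in for a mapping neural network.
    """
    rng = random.Random(seed)
    W = [[rng.uniform(-0.1, 0.1) for _ in range(in_dim)] for _ in range(out_dim)]
    n = len(pairs)
    for _ in range(epochs):
        grad = [[0.0] * in_dim for _ in range(out_dim)]
        for x, target in pairs:
            pred = matvec(W, x)
            for i in range(out_dim):
                err = pred[i] - target[i]
                for j in range(in_dim):
                    # d/dW[i][j] of (pred[i] - target[i])**2, averaged over pairs
                    grad[i][j] += 2 * err * x[j] / n
        for i in range(out_dim):
            for j in range(in_dim):
                W[i][j] -= lr * grad[i][j]
    return W

# Hypothetical paired data: (image-spoke feature, hub vector of its text caption).
pairs = [
    ([1.0, 0.0], [0.5, 1.0]),
    ([0.0, 1.0], [1.0, -0.5]),
    ([1.0, 1.0], [1.5, 0.5]),
]
W = train_linear_mapping(pairs, in_dim=2, out_dim=2)

# Project a new, unpaired image feature into the hub space.
print([round(v, 3) for v in matvec(W, [2.0, 1.0])])
```

Only the small mapping is fit from paired data here; the spoke features themselves are taken as given, which is the division of labor the hub-and-spokes description implies.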

"One challenge with these self-supervised learning methods is that they have a voracious appetite for compute cycles because they are able to create their own training labels from the raw data," said LLNL computer scientist Brian Van Essen, the project’s architecture team lead. "To address the computing needs for both image and video ‘spokes,’ we are developing a scalable deep learning framework that is optimized for the Lab’s flagship high-performance computing systems."

Researchers said LLNL’s self-supervised learning approach has demonstrated impressive performance across a broad range of image recognition tasks, outperforming other unsupervised deep learning systems in accuracy across the board. LLNL computer scientist Nathan Mundhenk, the primary creator of the team’s self-supervised system, presented the results in June at the prestigious 2018 Conference on Computer Vision and Pattern Recognition (CVPR).

Chen said the Semantic Wheel, part of a three-year Laboratory Directed Research and Development (LDRD) Strategic Initiative project, is "pushing the limits" of deep learning and high-performance supercomputing machines, and he and his team plan to improve the neural networks further by adding more data. With the project now at its midpoint, the approach will serve as a building block for future efforts by the National Nuclear Security Administration (NNSA) to develop and apply data analytics to improve detection of nuclear proliferation activities.

"Our goal is to bring together new data science capabilities from across the Department of Energy’s labs to help address the most difficult challenges in nuclear nonproliferation," said LLNL’s Deputy Associate Director for Data Science Jim Brase. "The Semantic Wheel will be one of our most important tools."

For more information on this and other data science projects at LLNL, visit the Data Science Institute’s homepage.

Contact

Jeremy Thomas

(925) 422-5539