LLNL/LBNL team named as Gordon Bell Award finalists for work on modeling neutron lifespans


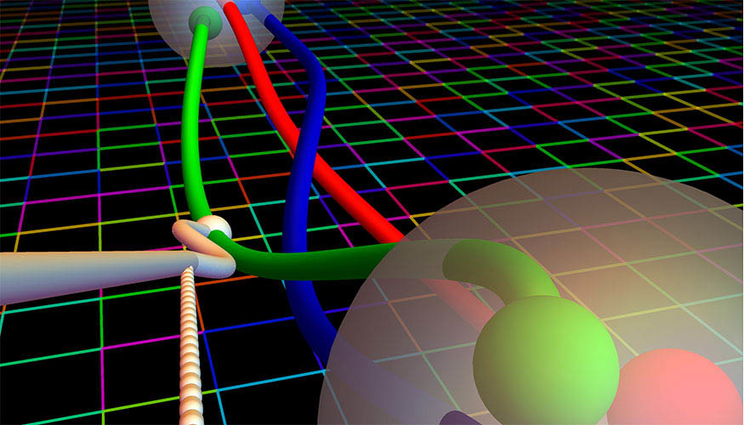

Beta decay, the decay of a neutron (n) to a proton (p) with the emission of an electron (e) and an electron antineutrino (ν). In the figure, gA is depicted as the white node on the red line. The square grid indicates the lattice. Image by Evan Berkowitz/Forschungszentrum Jülich/Institut für Kernphysik/Institute for Advanced Simulation


A team of scientists and physicists headed by the Lawrence Livermore and Lawrence Berkeley national laboratories has been named as one of six finalists for the prestigious 2018 Gordon Bell Award, one of the world’s top honors in supercomputing.

Using the Department of Energy’s newest supercomputers, LLNL’s Sierra and Oak Ridge’s Summit, a team led by computational theoretical physicists Pavlos Vranas of LLNL and André Walker-Loud of LBNL developed an improved algorithm and code that can more precisely determine the lifetime of a neutron, an achievement that could lead to discovering new, previously unknown physics, researchers said.

The team’s approach involves simulating the fundamental theory of quantum chromodynamics (QCD) on a fine grid of space-time points called the lattice. QCD theory describes how particles like quarks and gluons make up protons and neutrons.

The lifetime of a free neutron, which decays after about 15 minutes on average, is important because it has a profound effect on the mass composition of the universe, Vranas explained. Using previous-generation supercomputers at ORNL and LLNL, the team was the first to calculate the nucleon axial coupling, a quantity (denoted by gA) directly related to the neutron lifetime, at 1 percent precision. Two different types of real-world experiments have measured the neutron lifetime, and their results disagree at an experimental accuracy of about 0.1 percent, a discrepancy that researchers believe may be related to new physics affecting each experiment.
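To illustrate why the precision on gA matters so much, the short sketch below propagates an uncertainty in gA into an uncertainty in the neutron lifetime. It assumes the standard leading-order relation in which the decay rate (the inverse lifetime) is proportional to 1 + 3·gA², with all other inputs held fixed. The value gA ≈ 1.27 and the function names are assumptions chosen for illustration; they are not taken from the team's code.

```python
# Illustrative sketch only: assumes 1/lifetime is proportional to (1 + 3*g_A**2)
# and that every other input to the lifetime is held fixed.

ASSUMED_G_A = 1.27  # approximate axial coupling, used here purely for illustration


def lifetime_sensitivity(g_a: float) -> float:
    """Return |d ln(lifetime) / d ln(g_A)| for lifetime ~ 1 / (1 + 3*g_A**2)."""
    return 6.0 * g_a**2 / (1.0 + 3.0 * g_a**2)


def lifetime_relative_uncertainty(g_a: float, g_a_relative_uncertainty: float) -> float:
    """Propagate a small relative uncertainty in g_A to the lifetime (first order)."""
    return lifetime_sensitivity(g_a) * g_a_relative_uncertainty


factor = lifetime_sensitivity(ASSUMED_G_A)
lifetime_unc = lifetime_relative_uncertainty(ASSUMED_G_A, 0.01)

print(f"sensitivity factor: {factor:.2f}")
print(f"1% on g_A gives about {100 * lifetime_unc:.1f}% on the lifetime")
```

Under these assumptions, a 1 percent uncertainty on gA translates into a somewhat larger relative uncertainty on the predicted lifetime, which is why a comparison with experiments accurate to about 0.1 percent calls for a much more precise calculation.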

To help resolve this discrepancy, Vranas and his team have moved their calculation onto the new-generation supercomputers Sierra and Summit, aiming to improve their precision to better than 1 percent and get closer to the experimental results. The team has fully optimized its codes for the new CPU (central processing unit)/GPU (graphics processing unit) architectures of the two supercomputers. That work involved developing an algorithm that exponentially speeds up calculations, a method for optimally distributing GPU resources and a job manager that allows CPU and GPU jobs to be interleaved.
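The benefit of interleaving CPU and GPU jobs can be seen in a toy model. The sketch below is not the team's job manager and does not reflect how METAQ or MPI_JM work internally; the job names and durations are hypothetical, and the jobs are assumed to be independent of one another. It simply compares running every job back to back with letting CPU-only work proceed while the GPUs are busy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Job:
    name: str       # hypothetical label, for illustration only
    resource: str   # "cpu" or "gpu"
    minutes: int    # hypothetical run time


def serial_makespan(jobs: list[Job]) -> int:
    """Total time if jobs run one after another, leaving one resource idle each time."""
    return sum(job.minutes for job in jobs)


def interleaved_makespan(jobs: list[Job]) -> int:
    """Total time if CPU jobs and GPU jobs run side by side on the same nodes.

    Assumes independent jobs, so each resource just works through its own queue.
    """
    busy = {"cpu": 0, "gpu": 0}
    for job in jobs:
        busy[job.resource] += job.minutes
    return max(busy.values())


jobs = [
    Job("gpu_solve_a", "gpu", 30),
    Job("cpu_analysis_a", "cpu", 20),
    Job("gpu_solve_b", "gpu", 30),
    Job("cpu_analysis_b", "cpu", 20),
]

serial = serial_makespan(jobs)
interleaved = interleaved_makespan(jobs)
print(f"serial: {serial} min, interleaved: {interleaved} min")
```

In this toy case the CPU work is hidden entirely behind the GPU work, so the allocation finishes when the longer of the two queues does.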

"New machines like Sierra and Summit are disruptively fast and require the ability to manage and process more tasks, amounting to about a factor of 10 increase. As we move toward exascale, job management is becoming a huge factor for success. With Sierra and Summit, we will be able to run hundreds of thousands of jobs and generate several petabytes of data in a few days — a volume that is too much for the current standard management methods," said LBNL’s Walker-Loud. "The fact that we have an extremely fast GPU code (QUDA) and were able to wrap our entire lattice QCD scientific application with new job managers we wrote (METAQ and MPI_JM) got us to the Gordon Bell finalist stage, I believe."

The resulting axial coupling calculation, Vranas said, will provide the neutron lifetime that the fundamental theory of QCD predicts. Any deviations from the theory may be signs of new physics beyond current understanding of nature and the reach of the Large Hadron Collider.

"We’ve demonstrated that we can use this next generation of computers efficiently, at about 15-20 percent of peak speed," Vranas said. "This research takes us further, and now with these computers we can move forward with precision better than one percent, in an attempt to find new physics. This is an exciting time."

On Sierra and Summit, the team was able to reach sustained performance of about 20 petaFLOPS (FLOPS stands for floating-point operations per second), or roughly 15 percent of the peak performance for Sierra. The team found that the number of calculations they could do on the new machines rises linearly with the resources used, a solid indication that using more of the GPUs in the machines will result in even faster calculations. In turn, this will produce more data and therefore improve the precision of the neutron lifetime calculation, researchers said.
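The "percent of peak" figure is a simple ratio of sustained to theoretical peak performance. The sketch below works through it, assuming a theoretical peak of about 125 petaFLOPS for Sierra; that peak value is an assumption introduced here for illustration and is not stated in this article.

```python
# Illustrative arithmetic only. The sustained figure comes from the article;
# the peak figure is an assumption made for this example.

SUSTAINED_PFLOPS = 20.0             # "about 20 petaFLOPS" sustained
ASSUMED_SIERRA_PEAK_PFLOPS = 125.0  # assumed theoretical peak, not from the article


def fraction_of_peak(sustained: float, peak: float) -> float:
    """Return sustained performance as a fraction of peak performance."""
    if peak <= 0:
        raise ValueError("peak performance must be positive")
    return sustained / peak


efficiency = fraction_of_peak(SUSTAINED_PFLOPS, ASSUMED_SIERRA_PEAK_PFLOPS)
print(f"fraction of peak: {100 * efficiency:.0f}%")
```

With the assumed peak, the ratio lands within the 15 to 20 percent range that Vranas quotes.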

"Every time a new supercomputer comes along it just amazes you," Vranas said. "These systems are significantly different than their predecessors, and it was quite an effort on the code side to make this happen. This is important science and Sierra and Summit will accelerate it in a meaningful and impactful way."

LLNL postdoctoral researcher Arjun Gambhir contributed to the research. Co-authors include Evan Berkowitz (Institute for Advanced Simulation, Jülich Supercomputing Centre), M.A. Clark (NVIDIA), Ken McElvain (LBNL and University of California, Berkeley), Amy Nicholson (University of North Carolina), Enrico Rinaldi (RIKEN-Brookhaven National Laboratory), Chia Cheng Chang (LBNL), Balint Joo (Thomas Jefferson National Accelerator Facility), Thorsten Kurth (NERSC/LBNL) and Kostas Orginos (College of William and Mary).

The Gordon Bell Prize is awarded each year to recognize outstanding achievements in high performance computing, with an emphasis on rewarding innovations in science applications, engineering and large-scale data analytics.

Other finalists include an LBNL-led collaboration using exascale deep learning on Summit to identify extreme weather patterns; a team from ORNL that developed a genomics application on Summit capable of determining the genetic architectures for chronic pain and opioid addiction at up to five orders of magnitude beyond the current state-of-the-art; an ORNL team that used an artificial intelligence system to automatically develop a deep learning network on Summit capable of identifying information from raw electron microscopy data; a team from the University of Tokyo that applied artificial intelligence and trans-precision arithmetic to accelerate simulations of earthquakes in cities; and a team led by China’s Tsinghua University that developed a framework for efficiently utilizing an entire petascale system to process multi-trillion edge graphs in seconds.

The Gordon Bell winner will be announced at the 2018 International Conference for High Performance Computing, Networking, Storage and Analysis (SC18) in Dallas this November.

Contact

Jeremy Thomas


[email protected]

(925) 422-5539

Related Links

SC18: Gordon Bell Prize Finalist No. 1

SC18: Gordon Bell Prize Finalist No. 2

Gordon Bell Awards

"A per-cent-level determination of the nucleon axial coupling from quantum chromodynamics"

Tags

HPC, Simulation, and Data Science

Computing
