LLNL team achieves largest graph analytics to date

(Download Image)

(Download Image)

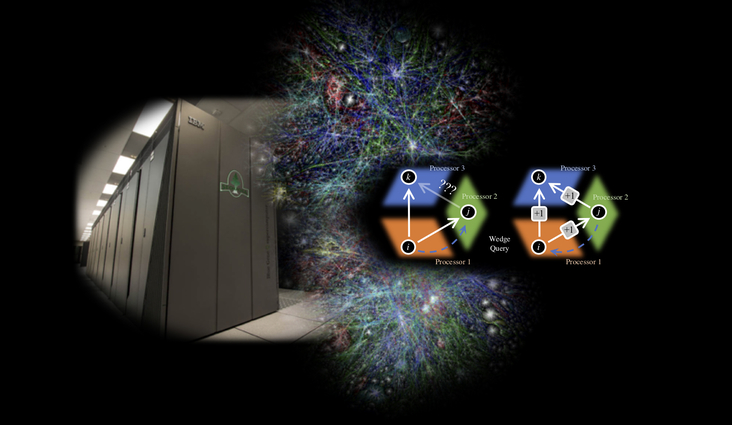

Computer scientists and applied mathematicians searched for 1 quadrillion “triangles” — think three-way connections between friends of friends on a social network — using 1 million processors on LLNL’s IBM BlueGene/Q Sequoia supercomputer. The work is the largest high-performance computing triangle-counting effort to date and earned the team a third consecutive title of “Champions” at the IEEE HPEC Graph Challenge. Photo illustration by Geoff Sanders

Whenever Amazon makes a shopping recommendation based on past purchases, Facebook figures you might know friends of friends or Instagram decides who qualifies as an “influencer,” they’re using tools called graph analytics to determine those multiple threads of connections.

Besides broad usage in the tech industry, graph analytics also have national security applications, where algorithms dig through massive datasets to find anomalies or patterns of nefarious activity. It’s in that vein that a Lawrence Livermore National Laboratory (LLNL) team of computer scientists and applied mathematicians, including Roger Pearce, Geoffrey Sanders, postdoc Benjamin Priest and visiting scholar Trevor Steil, searched for 1 quadrillion “triangles” — relationships such as three-way connections between friends of friends on a social network — using 1 million processors on LLNL’s IBM BlueGene/Q Sequoia supercomputer. The work is the largest high-performance computing (HPC) triangle-counting effort to date and earned the team their third consecutive title of “Champions” at the 2019 IEEE HPEC Graph Challenge, an annual effort by MIT Lincoln Labs, IEEE and Amazon Web Services to develop a new kind of benchmark for analyzing graphs and data derived from social media, sensor feeds and scientific data.

The analysis was accomplished with a triangle enumeration research code based on HavoqGT, an open source research platform developed at LLNL for expressing graph algorithms on HPC systems and derived from Pearce’s Ph.D. thesis work. HavoqGT counts triangle relationships in distributed memory and is one of the data science benchmarks for CORAL-2 (the Collaboration of Oak Ridge, Argonne and Livermore national laboratories), which will bring exascale computing to LLNL in late 2022.

Last year, researchers ran HavoqGT on 1 million cores of Sequoia for the Graph Challenge, and in April, Pearce and his colleagues successfully ran the benchmark on the entire Sequoia machine and its 1.5 million processors. The latest award-winning run required generating more than a trillion “edges,” or connections between the 68 billion graph “vertices” and about an hour of compute time. To accomplish the feat, Sequoia had to check more than a quadrillion (1,000,000,000,000,000) possible locations to determine if a triangle relationship existed.

“It’s the first time we think petascale has actually been used in HPC graph analysis,” said Pearce, who wrote the algorithm. “It’s important because it shows that, using these large supercomputers, we can really crunch data in a way that was previously thought to be very difficult to do, so much that people didn’t try. Counting triangles is an important demonstration, or even a building block, for other analytics, like measuring cohesiveness of a community in a graph, or tracking movements of communities over time.”

In 2018, Pearce and Sanders were named as Graph Challenge Champions for computing the full k-truss decomposition (an analytic that utilizes triangle enumeration) in large, real-world distributed graphs on the LLNL computing system Catalyst. It followed up on previous work Pearce did with HavoqGT on triangle counting that was crowned a 2017 Graph Challenge Champion.

Pearce said the latest record-breaking run shows that Sequoia, which is set to be retired in early 2020, is “one of the better HPC architectures for graph analytics.” In May, researchers ran a customized version of HavoqGT on all of LLNL’s Quartz system, to see how it would run on a traditional Linux cluster. Despite Sequoia’s slower processors and advanced age (in computing terms), it processed the same data 2.3 times faster than Quartz.

Pearce said researchers are working on a graphics processing unit (GPU) version of HavoqGT so they can run it on LLNL’s newest supercomputer Sierra, a heterogenous machine comprised of IBM CPUs and NVIDIA GPUs. If they can overcome the interconnect and communication challenges that come with a GPU-based system, that result could also be submitted for the 2020 Graph Challenge, he said.

“We’re trying to demonstrate a new approach for doing the same computation with less communication,” Pearce said.

Contact

Jeremy Thomas

Jeremy Thomas

[email protected]

(925) 422-5539

Related Links

Graph Challenge ChampionsComputation at LLNL

Tags

HPC, Simulation, and Data ScienceComputing

Featured Articles