LAB REPORT

Science and Technology Making Headlines

March 8, 2024

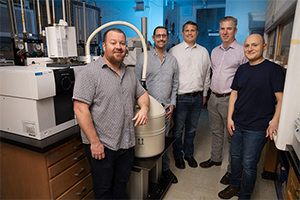

Left to right: Keith Morrison, Jason Moore, Keo Springer, Keith Coffee and Batikan Koroglu stand in front of the cryo-focused pyrolysis GC-MS (gas chromatography mass spectrometer). The team used this instrument to capture and cryo (liquid nitrogen) trap the gases that evolve from high explosives as they thermally decompose. Photo by Blaise Douros/LLNL.

Hot stuff

TATB (1,3,5-triamino-2,4,6-trinitrobenzene) is an important explosive compound because of its extensive use in munitions and weapons systems worldwide. Despite that importance and 50 years of study, researchers are still working to understand its response to temperature extremes.

A Lawrence Livermore National Laboratory (LLNL) team has uncovered a new thermal decomposition pathway for TATB that has a significant bearing on computational models that predict the energy release and thermal behavior of TATB and possibly other insensitive high explosives (IHEs).

TATB is widely viewed as the most stable IHE, as it is not easily detonated by external stimuli. It does not undergo the thermal sequence of deflagration to detonation, a trait that is unique among explosives. It requires a proper detonation chain to initiate, so the material can be handled with relatively little risk of accidental initiation if proper safety methods are followed.

One aspect of this safety envelope is how the material responds to temperature extremes: whether it becomes more sensitive and is no longer safe to handle when subjected to abnormal thermal environments.

“Our goal with this project was to understand the behavior experimentally to construct computational models predicting behavior for any thermal exposure conditions,” said LLNL scientist Keith Morrison.

Hydrogen is one of the smallest elements but may hold a big promise for clean energy. Photo courtesy of USGS.

Hunting for hydrogen underground

U.S. Energy Department Secretary Jennifer Granholm calls clean hydrogen the “‘Swiss Army Knife’ of zero-carbon solutions because it can do just about everything.” But producing hydrogen with current technologies takes a lot of energy and is carbon intensive. Geologic hydrogen could sidestep both obstacles, which could ultimately reduce costs.

Last month was particularly busy on this front: In early February, the Energy Department announced it was investing $20 million into 16 projects related to naturally occurring hydrogen.

While tracking down existing geologic hydrogen resources is a first challenge, the $20 million in Advanced Research Projects Agency-Energy funding is going toward researching how to accelerate and stimulate the natural geochemical process by which it is made. Not all reserves of naturally occurring hydrogen are going to be accessible and at concentrations economically viable to extract, so helping these natural processes along would increase the quantity of hydrogen we can get out of the ground.

Maria Gabriela Davila Ordonez, a research scientist and chemical engineer at Lawrence Livermore National Laboratory, has received Energy Department funds to research how to stimulate hydrogen production where it is not currently happening by injecting specific organic acids underground.

“One of our main impacts for this project will be analyzing or quantifying the change in the physical properties in the rock,” Davila Ordonez said.

Jennifer Pett-Ridge speaks at the Roads to Removal symposium at UC Merced. Photo courtesy of UC Merced.

Fighting climate change, one symposium at a time

Discussions around climate change often center around the bad news — the planet is warming, weather is getting more extreme, resources are increasingly scarce. But there also is cause for hope. There are options to mitigate climate change, and some of them are already happening.

This was the message behind "Roads to Removal," a symposium at UC Merced based on the report by the same name. The report was commissioned by the Department of Energy and produced by Lawrence Livermore National Laboratory (LLNL) in conjunction with scientists from more than a dozen institutions across the nation, including UC Merced. Symposiums around the country will continue throughout the year.

Roughly 175 people from academia, science, agriculture and business attended the symposium, the first of several planned to highlight what can be done in specific areas of the United States to reduce global warming.

The federal government has set a goal to reach net-zero emissions by decarbonizing the economy, removing carbon dioxide from the atmosphere and storing at least a billion tonnes (metric tons) a year by 2050. "Roads to Removal" is aimed at determining how much carbon dioxide can be removed and at what cost. The UC Merced event focused on soils, cropland and management; direct air capture; geologic storage; and biomass carbon removal and storage.

"We can use technology that we know works today to remove carbon, and in the process create hundreds of thousands of jobs," said Jennifer Pett-Ridge, a senior staff scientist at LLNL and adjunct professor at UC Merced who is the lead author of the report. "We need to weigh how these new approaches to reducing carbon are going to affect our lives."

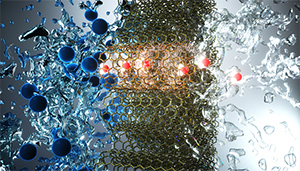

An artist’s view of small-diameter carbon nanotubes that let water molecules (red and white) pass through while rejecting ions (blue). High permselectivity of small-diameter nanotubes can enable advanced water desalination technologies. Illustration concept: A. Noy, T. A. Pham, Y. Li, Z. Li, F. Aydin (LLNL). Illustration by Ella Maru Studios.

Looking deep into the smallest pores

Membranes of vertically aligned carbon nanotubes (VaCNT) can be used to clean or desalinate water at high flow rates and low pressure. Recently, researchers at Karlsruhe Institute of Technology (KIT) and Lawrence Livermore carried out steroid hormone adsorption experiments to study the interplay of forces in the small pores. They found that VaCNT with a specific pore geometry and pore surface structure are suited for use as highly selective membranes.

Clean drinking water is of vital importance to people worldwide. Membranes are used to efficiently remove micropollutants, such as steroid hormones, which are harmful to health and the environment. One very promising membrane material is made of VaCNT.

In experiments with steroid micropollutants, researchers at KIT's Institute for Advanced Membrane Technology (IAMT) studied why VaCNT membranes are perfect water filters. They used membranes produced at Lawrence Livermore National Laboratory (LLNL), where they were developed by Francesco Fornasiero and his team. The experiments with the micropollutants were carried out and evaluated using the latest analytical instruments at IAMT.

The study was the first to focus on the interplay of hydrodynamic forces, friction and forces of attraction and repulsion. It provides basic findings with respect to water processing. These may benefit ultra- and nanofiltration processes controlled by nanopores.