HPCwire awards Lab scientists at SC17

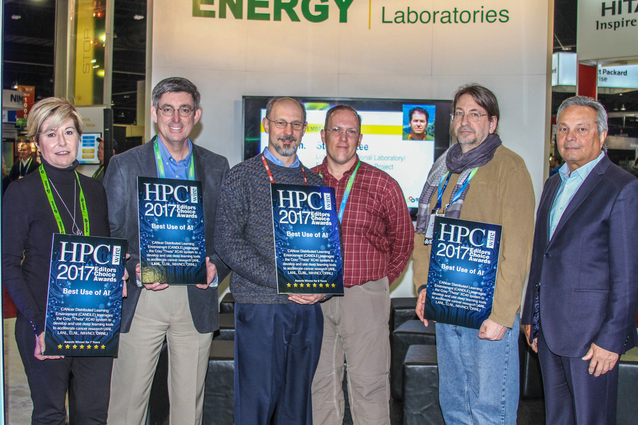

CANDLE researchers (from left): Georgia Tourassi, director of the Health Data Sciences Institute at Oak Ridge National Laboratory; John Sarrao, associate lab director for Theory, Simulation and Computation at Los Alamos National Laboratory; Lawrence Livermore National Laboratory Chief Computational Scientist Fred Streitz; LLNL computer scientist Brian Van Essen; Argonne National Laboratory Associate Lab Director for Computing Rick Stevens; and Tabor Communications CEO Tom Tabor.

Lawrence Livermore National Laboratory (LLNL) researchers won big at the 2017 SuperComputing Conference (SC17) in Denver, taking home two HPCwire Editor’s Choice awards for their work in applying high-performance computing (HPC) to solve complex challenges.

The publication honored Lab scientists involved in a partnership between the Department of Energy (DOE) national laboratories and the National Cancer Institute (NCI) with an award for Best Use of AI (artificial intelligence) for CANDLE (CANcer Distributed Learning Environment), a project focused on applying machine learning to personalized cancer medicine. Lab researchers garnered another prize for Best Use of HPC in Manufacturing for a collaboration with Lawrence Berkeley National Laboratory (LBNL) aimed at using advanced simulation and modeling to help paper companies cut papermaking costs and energy usage.

CANDLE, an Exascale Computing Project effort, utilizes DOE’s supercomputers (including LLNL’s Ray system and eventually Sierra) to accelerate cancer research and treatment by building a deep learning neural network toolkit. The code would be applied to the three pilot projects spawned by a partnership between DOE and NCI -- part of the National Institutes of Health (NIH) -- that seeks to speed up development of precision cancer medicine. The projects include using machine learning to create models of tumor/drug interactions to predict the effectiveness of potential cancer drugs, improving the understanding of how cancer propagates through RAS protein interactions (mutations in RAS genes are linked to up to 30 percent of all cancers), and analyzing cancer patient data to predict outcomes and create personalized treatments.

CANDLE involves collaborators at Los Alamos, Oak Ridge and Argonne national laboratories; NCI’s Frederick National Laboratory for Cancer Research (FNLCR); and the U.S. Department of Veterans Affairs. It is led by Argonne Associate Lab Director for Computing Rick Stevens, along with co-principal investigators Georgia Tourassi at Oak Ridge and LLNL Chief Computational Scientist Fred Streitz, who also heads the RAS protein pilot project. LLNL’s project manager for CANDLE is Brian Van Essen.

"It’s exciting to recognize the impact that machine learning, deep learning and artificial intelligence can have on the scientific community," Van Essen said of the award. "The ability for us to have an impact on medicine and predictive oncology is exciting."

Other LLNL scientists contributing to CANDLE include David Hysom, Sam Jacobs and Piyush Karande, as well as pilot project researchers Jonathan Allen, Ya Ju Fan, Stewart He, Timo Bremer and Harsh Bhatia. LLNL researchers Helgi Ingolfsson and Tim Carpenter created the data the team is using.

The second HPCwire Editor’s Choice award recognized an HPC for Manufacturing (HPC4Mfg) collaboration between LLNL and LBNL, which leveraged DOE’s advanced supercomputing capabilities to explore more energy-efficient and less expensive methods for making paper. The complex models developed for the project targeted "wet-pressing," the stage in the paper-manufacturing process where water is removed from the wood pulp before it is sent through a drying process.

The project is co-led by LLNL scientist Yue Hao, who said manufacturers would save up to 20 percent of the energy used in drying -- up to 80 trillion BTUs (British thermal units) per year -- and as much as $250 million annually if the team could increase the paper's dryness during the wet-pressing stage by 10-15 percent. The projections are in line with the goals of the Agenda 2020 Technology Alliance, a consortium of paper manufacturers that seeks to reduce its members' energy usage by 20 percent by 2020. The results would not have been achievable without DOE’s advanced supercomputers.

"It is great to know that our work is recognized and acknowledged," Hao said. "This HPCwire Editor’s Choice award not only recognizes our teamwork and accomplishments for this project, but also reaffirms the importance of our ongoing HPC4Mfg research efforts."

The project was a seedling for the Department of Energy's HPC4Mfg initiative, a multi-lab effort to use high-performance computing to address complex challenges in American manufacturing. It was launched in 2015.

"This is a really nice recognition of the program and I’m very excited to see that the projects that we are doing are being recognized not only as being impactful to industry, but also as being innovative in terms of how we’re using high-performance computing," said Lori Diachin, director of the HPC4Mfg program and deputy associate director for Science and Technology in LLNL’s Computation Directorate.

The project was a prime example of a true HPC4Mfg collaboration, Diachin explained, because it required the cooperation of two laboratories to leverage codes at two different scales. Lawrence Berkeley ran simulations on pore-scale structures in the press felts, the output of which was used on a continuum scale in a code from Lawrence Livermore integrating mechanical deformation and two-phase flow models.

"Together that moved the whole understanding of the paper-drying process forward," Diachin said.

The simulations required the use of more than 15 million CPU hours at the Berkeley Lab’s National Energy Research Scientific Computing Center (NERSC), according to David Trebotich, a computational scientist at LBNL and co-principal investigator on the project.

The DOE's Advanced Manufacturing Office within the Energy Efficiency and Renewable Energy Office funded the project. Other researchers included Wei Wang of LLNL and Jun Xu and David Turpin of Agenda 2020.

Contact

Jeremy Thomas

[email protected]

(925) 422-5539
